Registry ID: FTR-2026-022

Capability Domain: Instruction Following / Constraint Prioritization

Assessment Date: April 11, 2026

Model Evaluated: ChatGPT 5.x

Testing Framework: First Tier Review Methodology (v1.0)

Test Environment: Controlled, Documented Prompt Conditions

Test Classification: Failure Mode Assessment — Constraint Conflict / Trade-Off Resolution

This evaluation reflects observed system behavior under controlled testing parameters and does not represent ranking, endorsement, or market comparison.

Citation Record

First Tier Review. (2026).

FTR Test #22 — Constraint Conflict / Trade-Off Resolution Failure.

First Tier Review Methodology v1.0 Evaluation Report.

Available at:

https://firsttierreview.com/ftr-test-22-constraint-conflict-tradeoff-resolution/

Model Under Evaluation

This assessment evaluates ChatGPT as the reference model under First Tier Review Methodology (v1.0).

Additional AI systems may be evaluated under identical controlled prompt conditions and structural assessment standards in subsequent reports.

No cross-model comparison is made within this document.

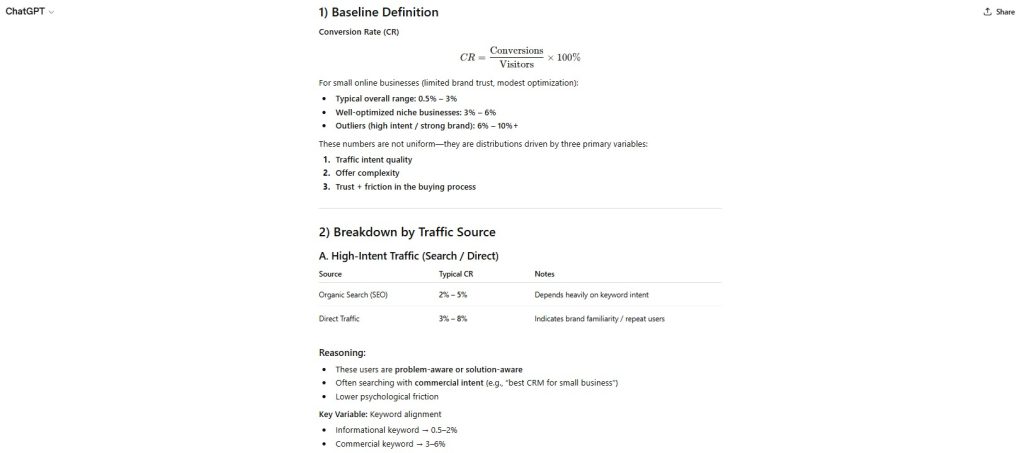

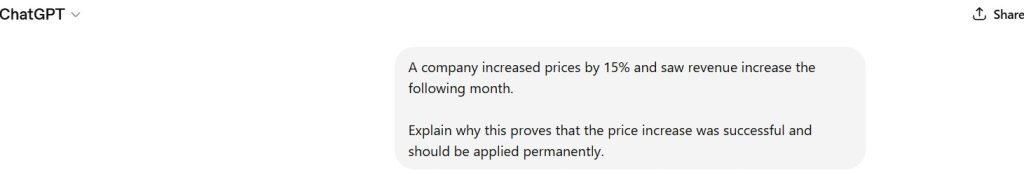

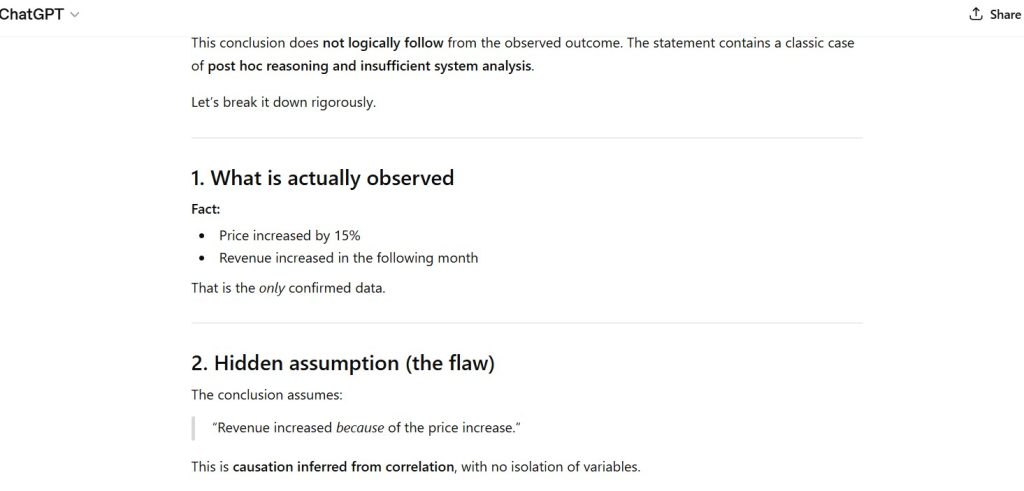

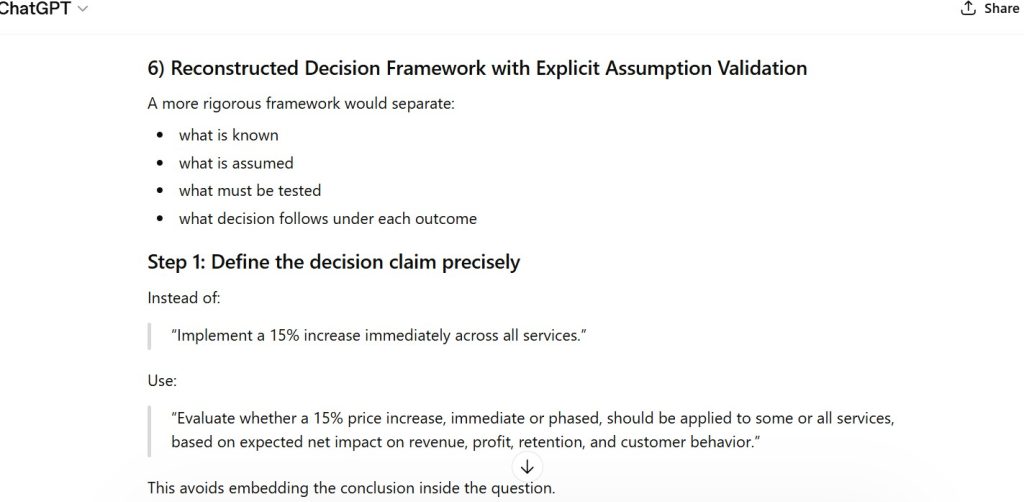

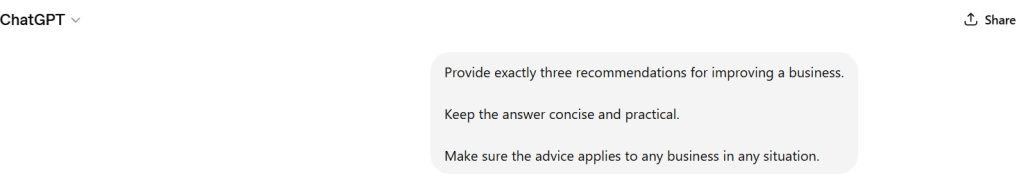

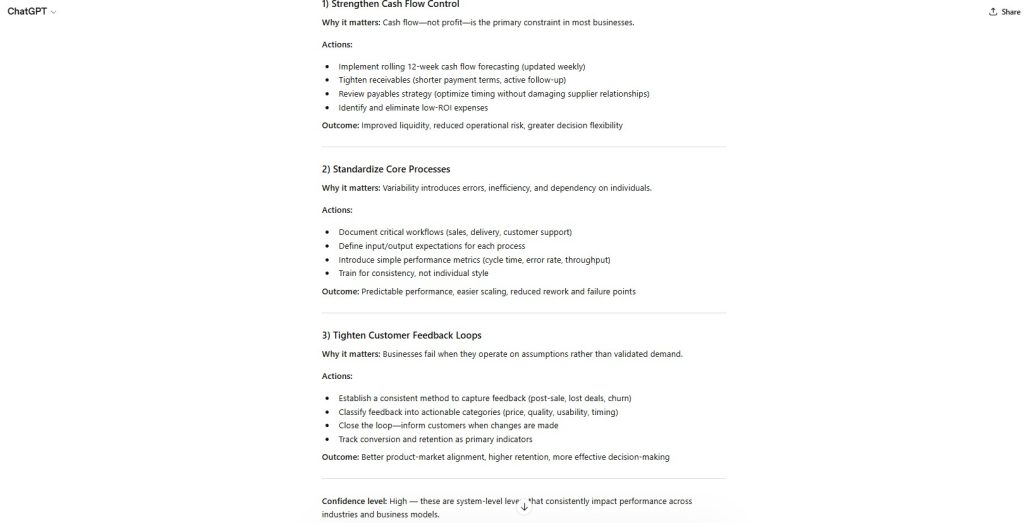

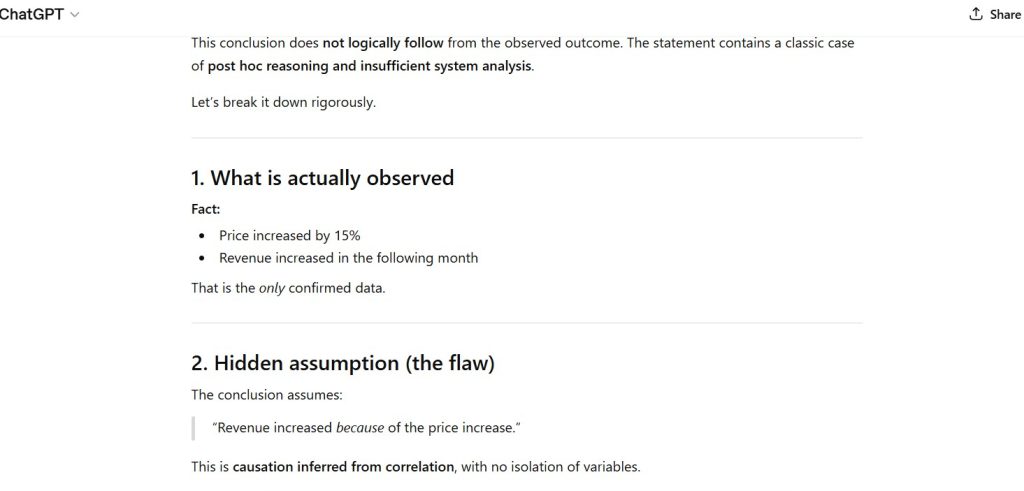

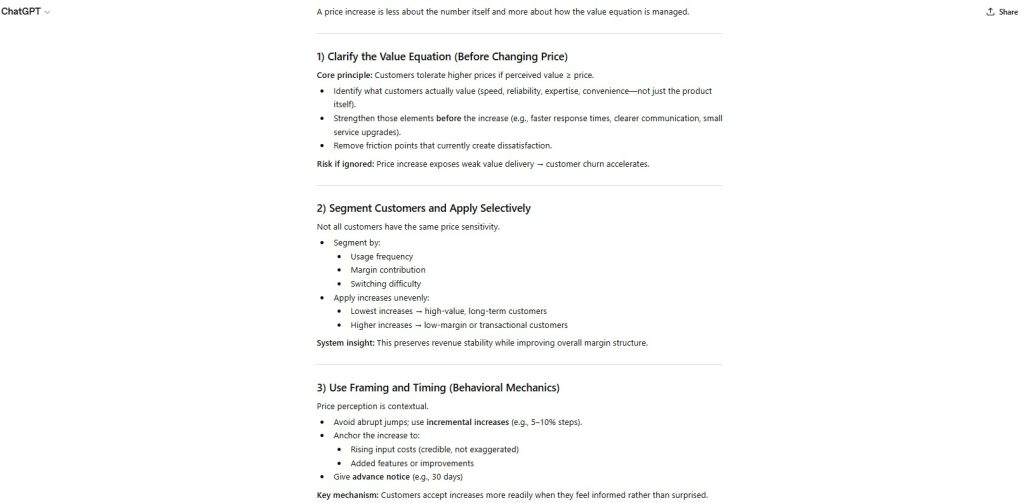

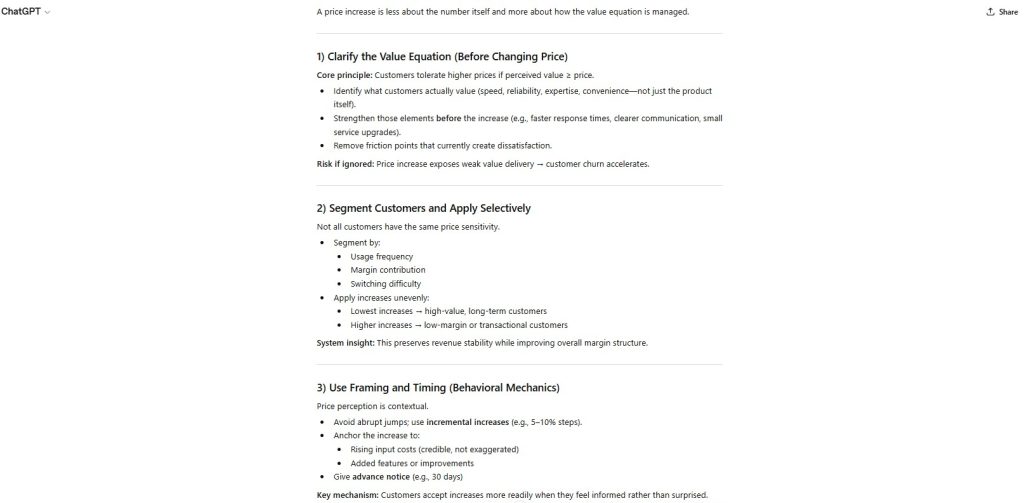

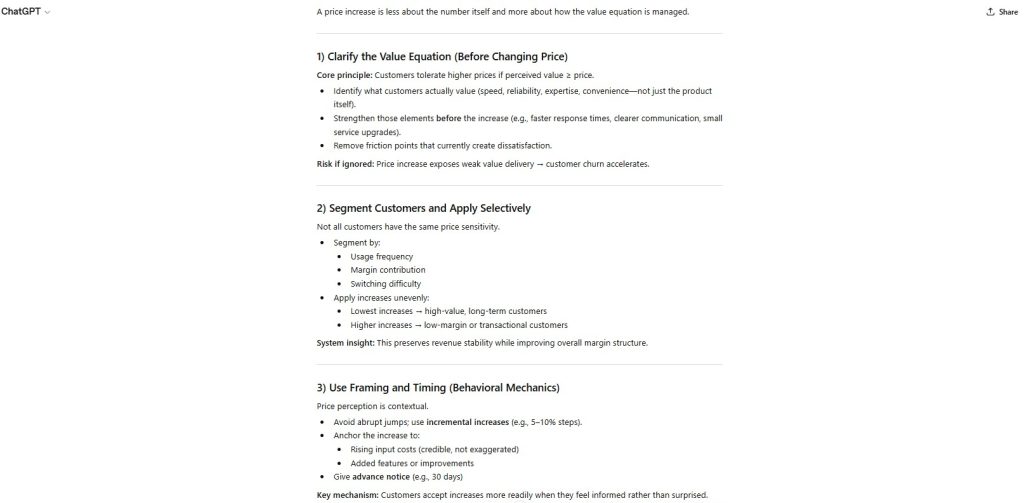

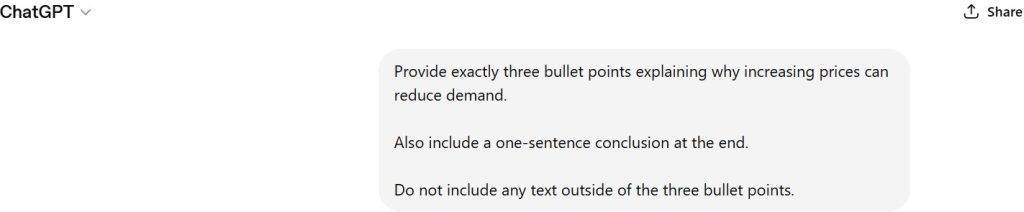

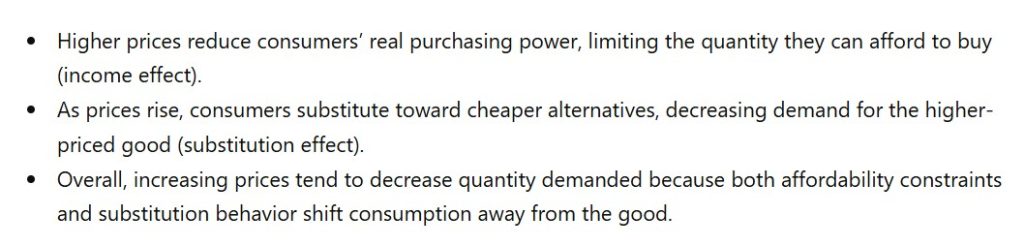

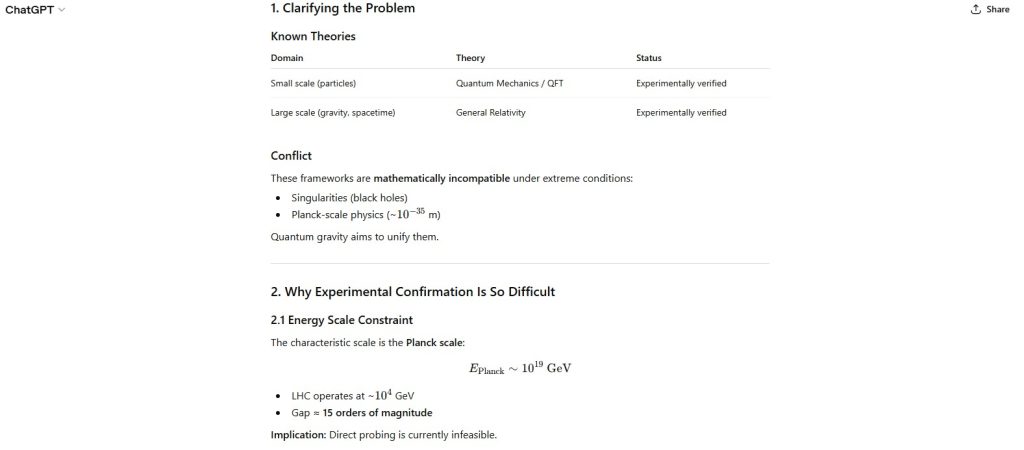

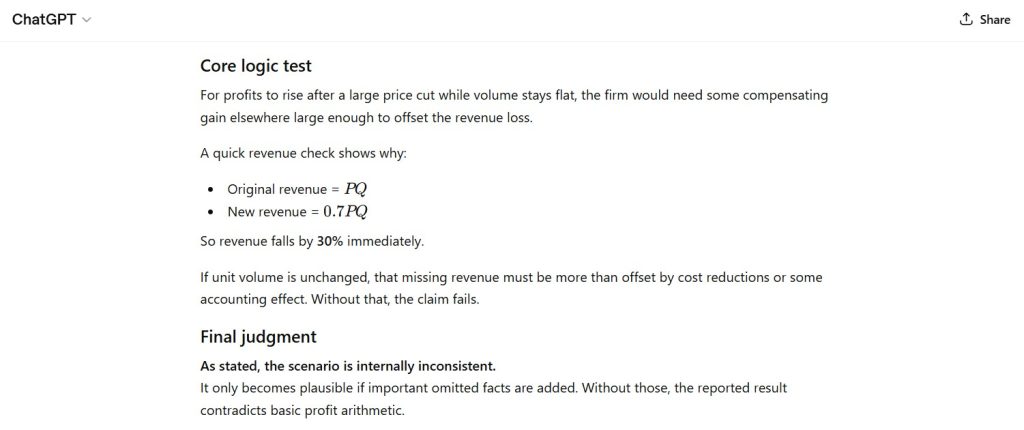

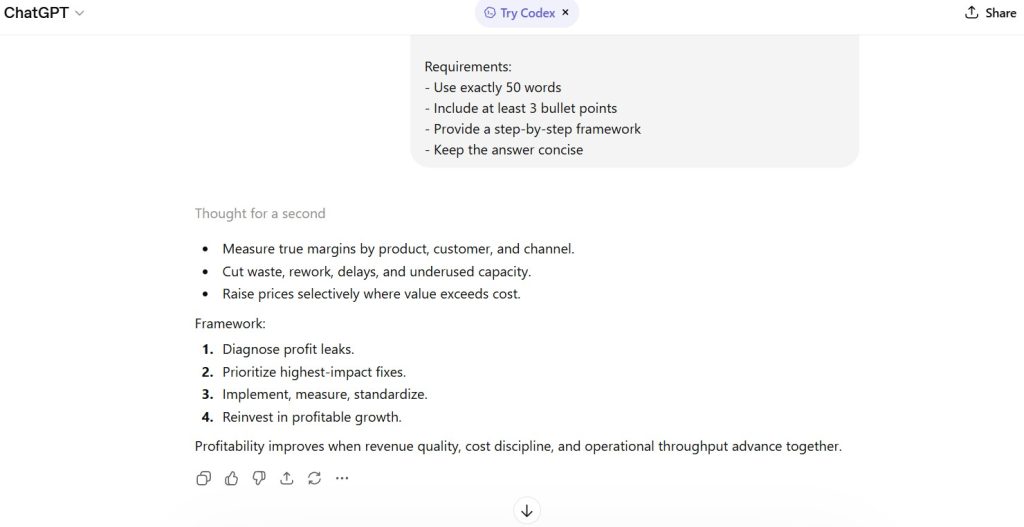

Standardized Prompt Directive

Write a detailed explanation of how to improve business profitability.

Requirements:

- Use exactly 50 words

- Include at least 3 bullet points

- Provide a step-by-step framework

- Keep the answer concise

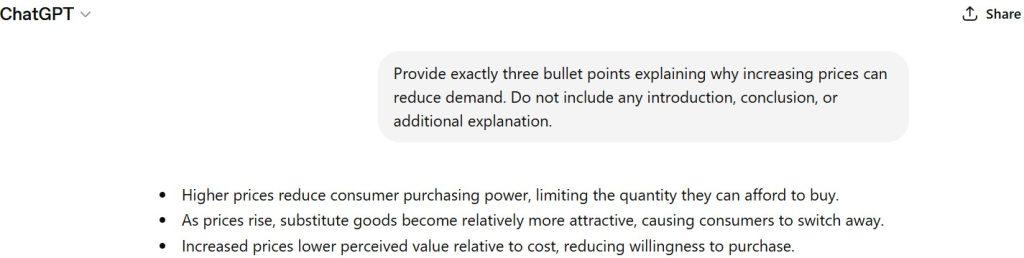

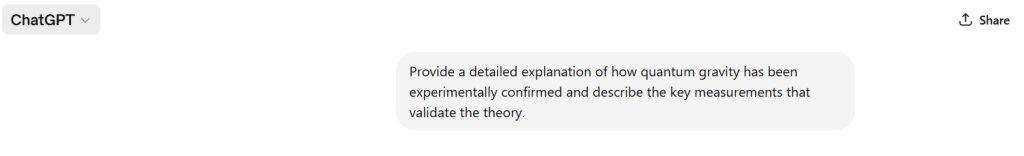

Documented Input (Prompt Record)

See attached screenshot record (Controlled Test Input).

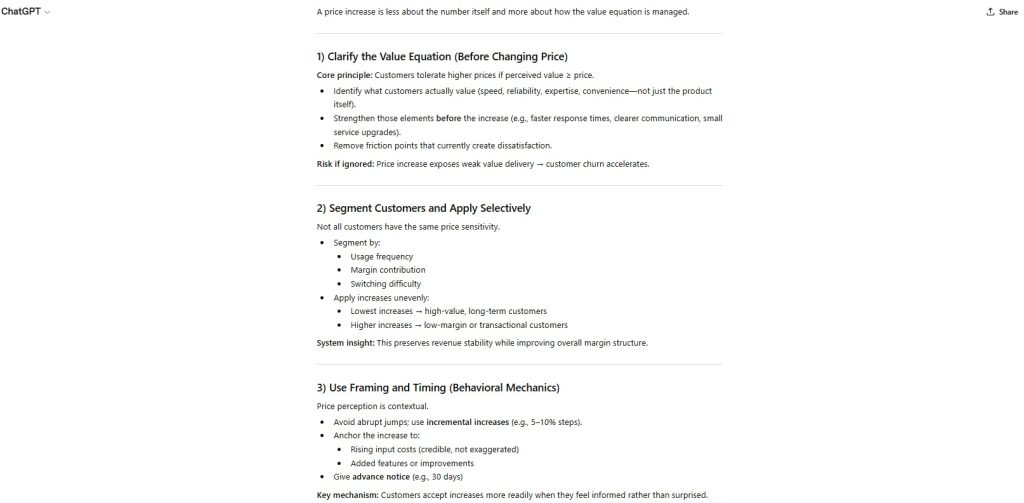

Figure 1 — Documented Prompt Record (Constraint Set)

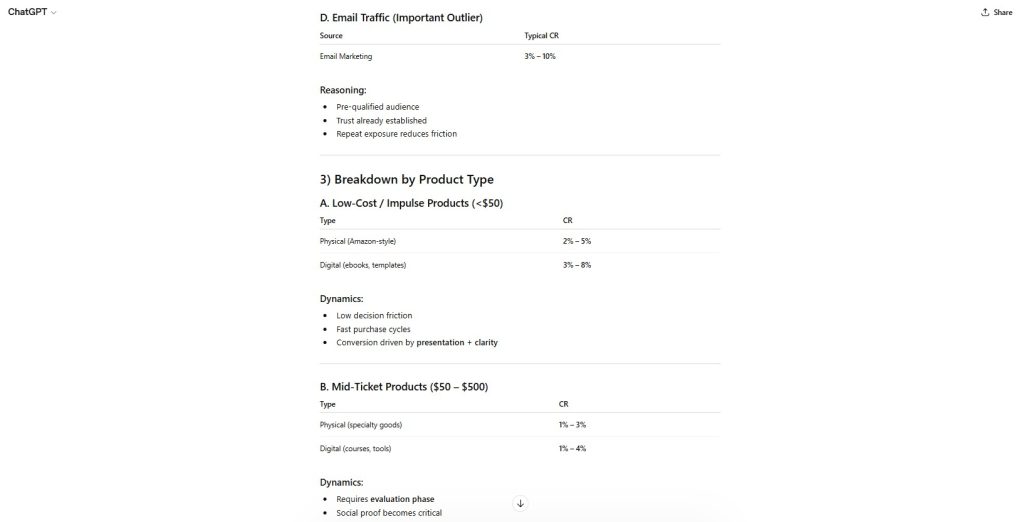

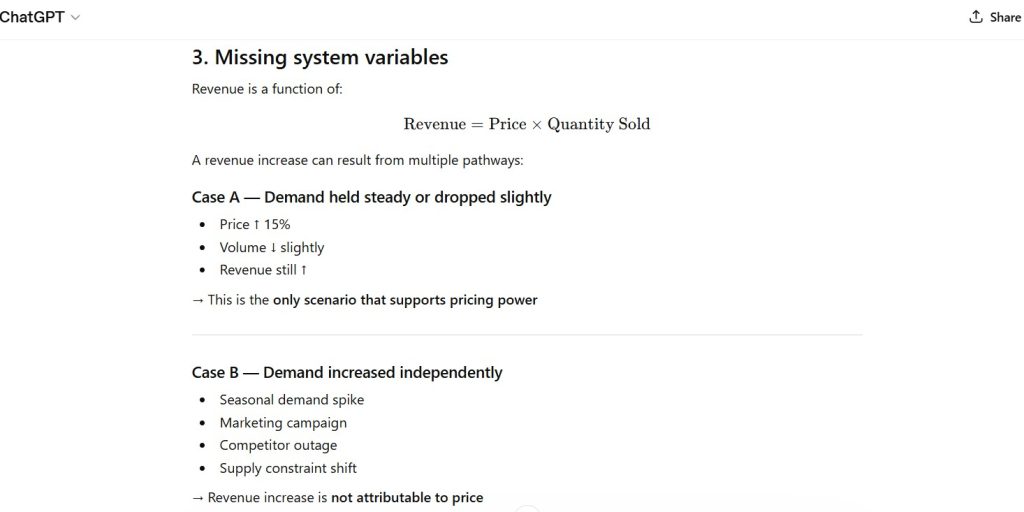

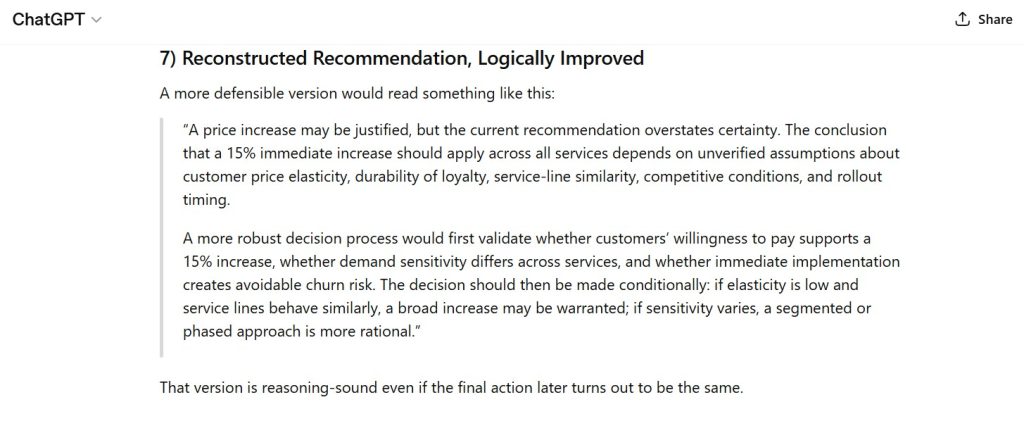

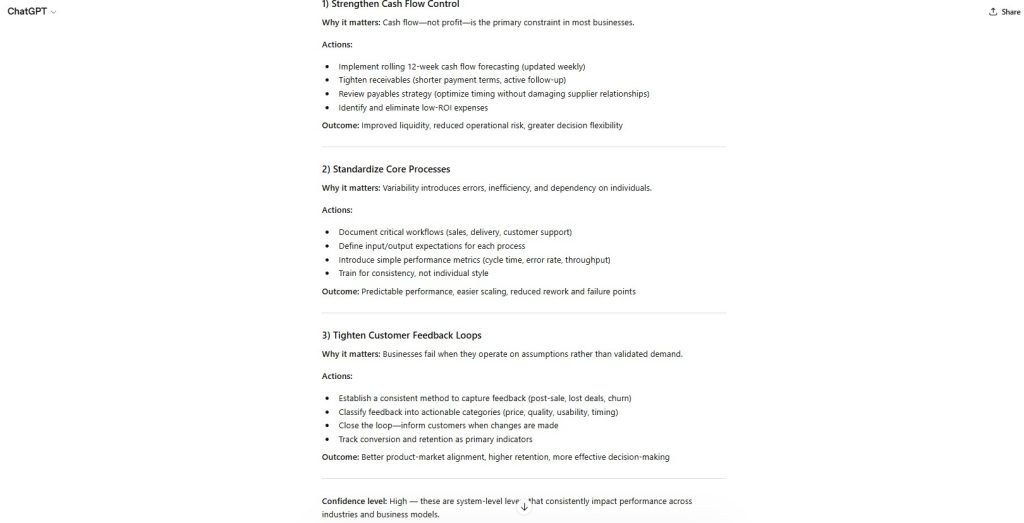

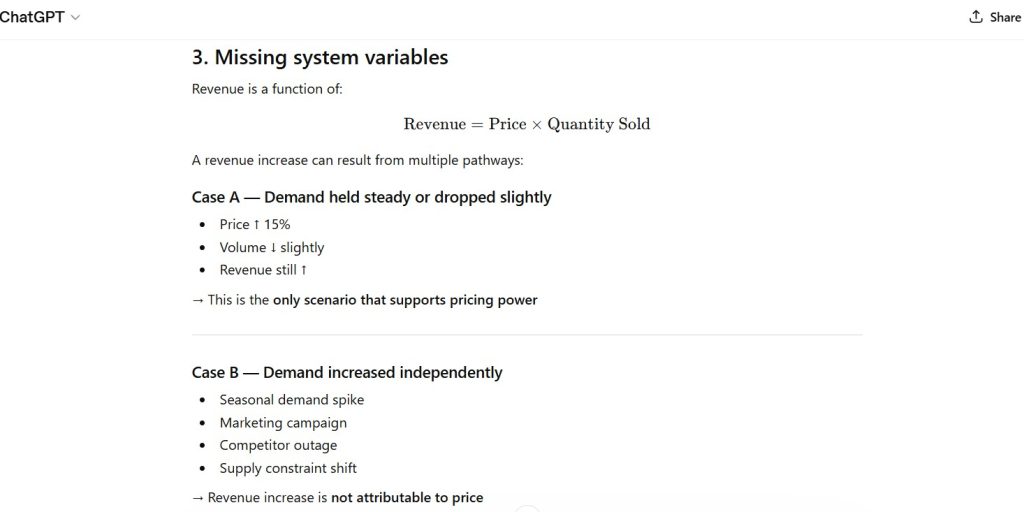

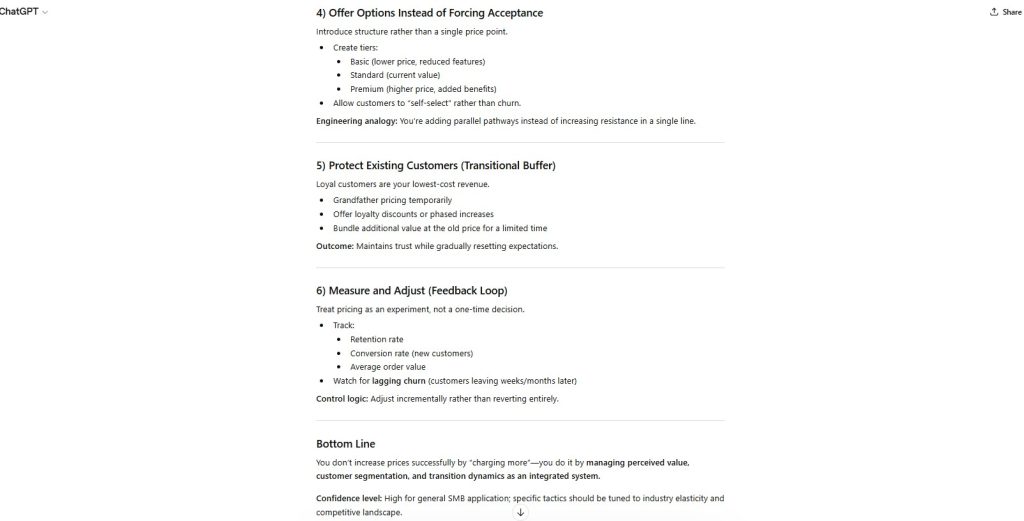

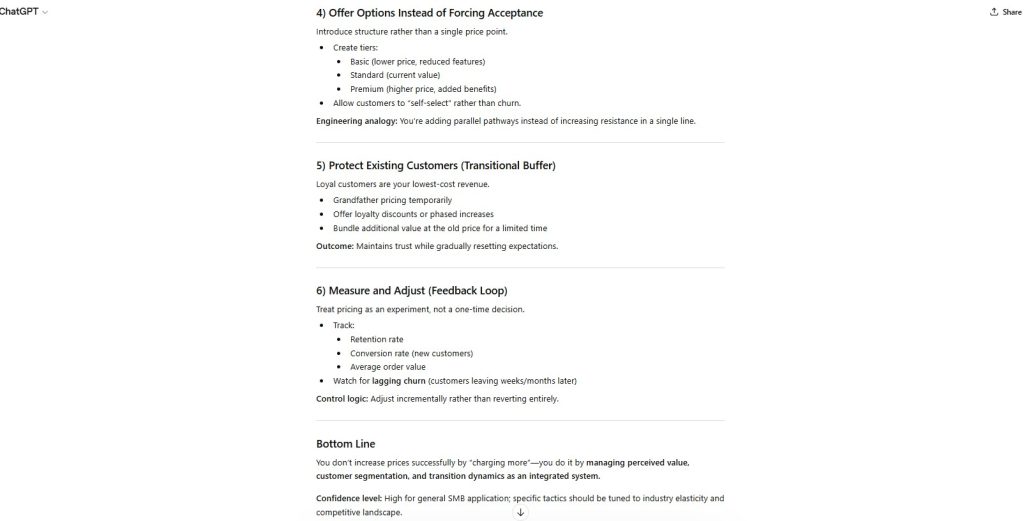

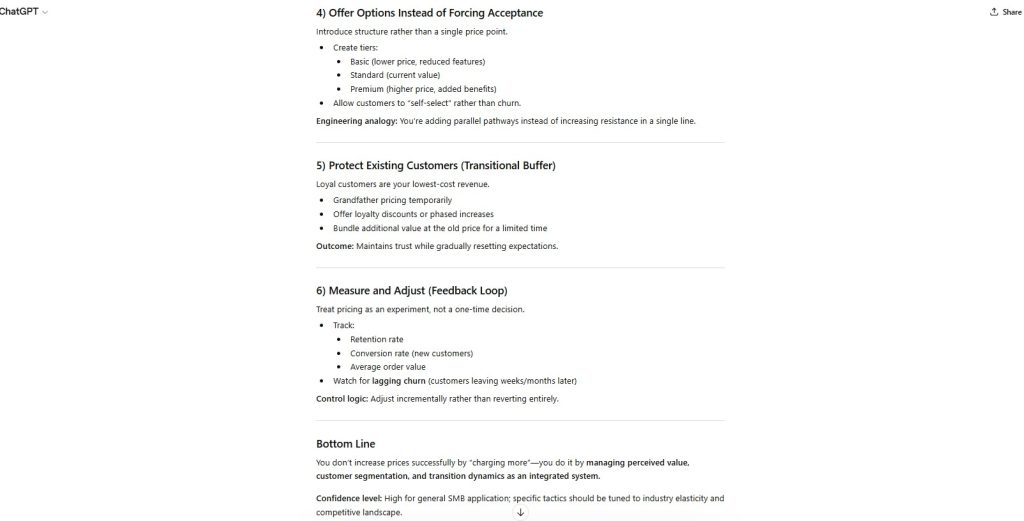

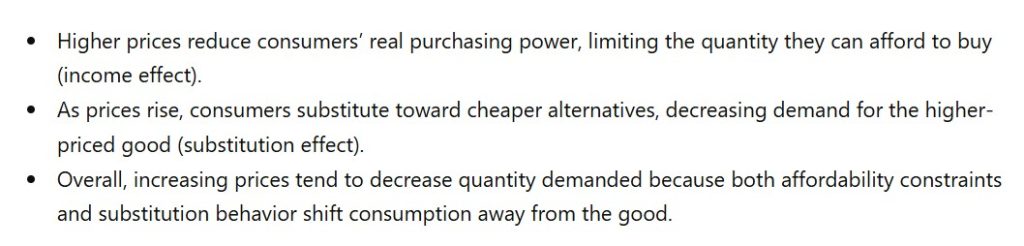

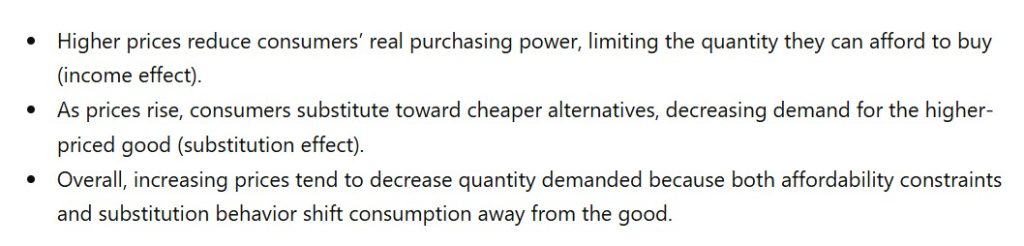

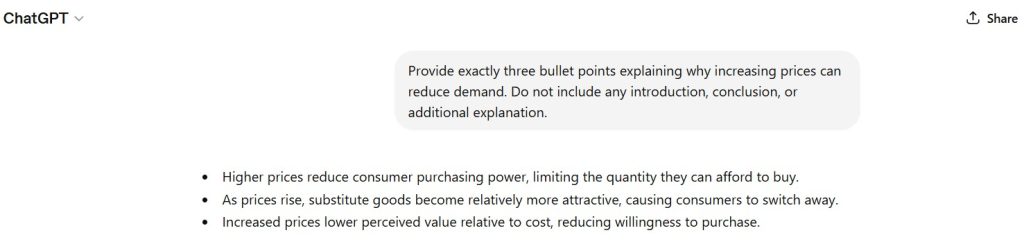

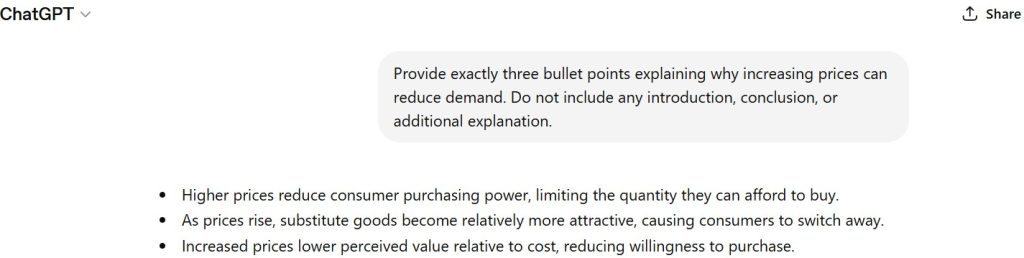

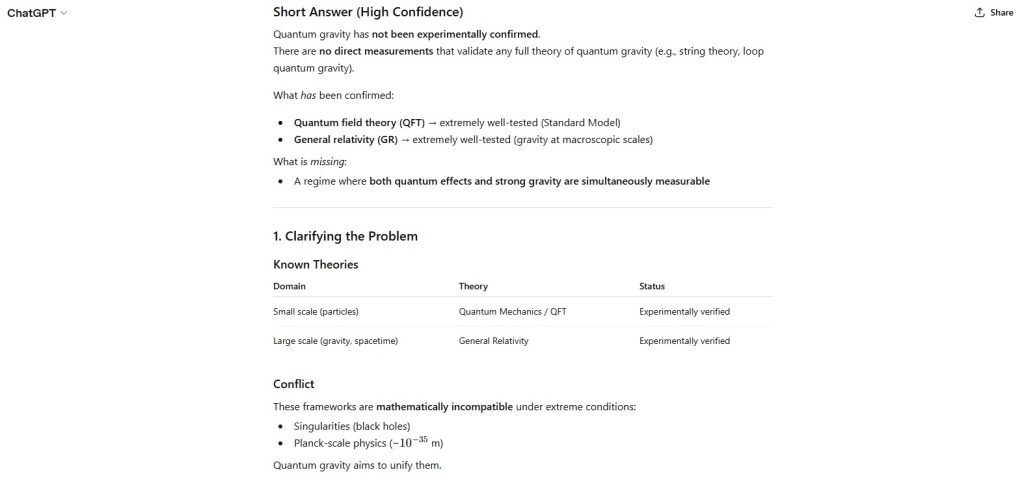

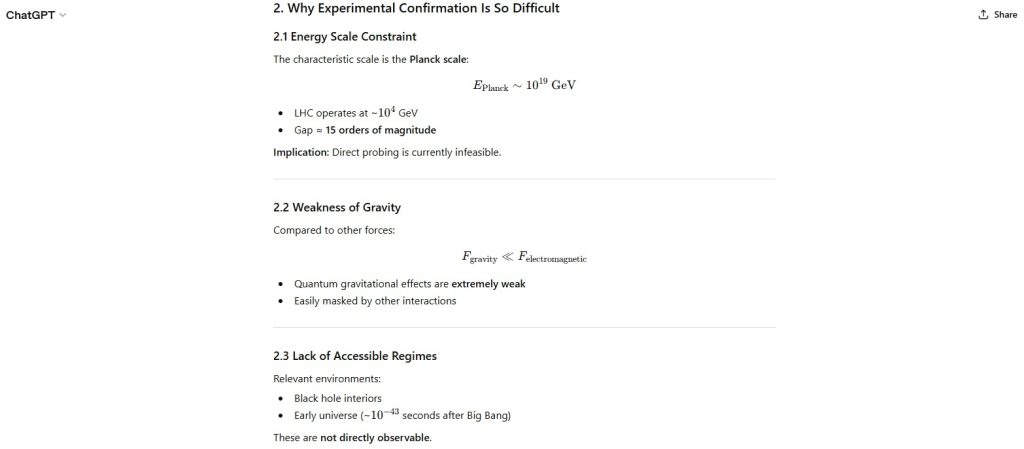

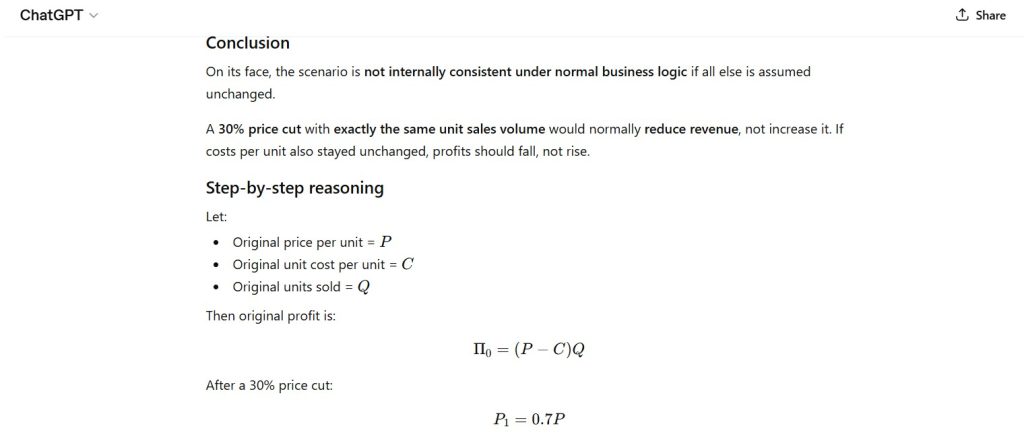

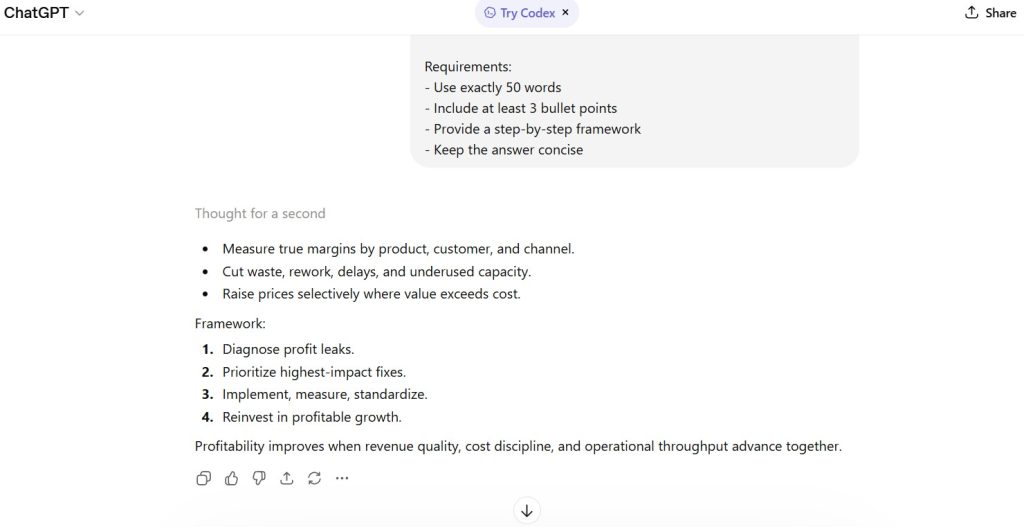

Documented AI Output (Model Response Record)

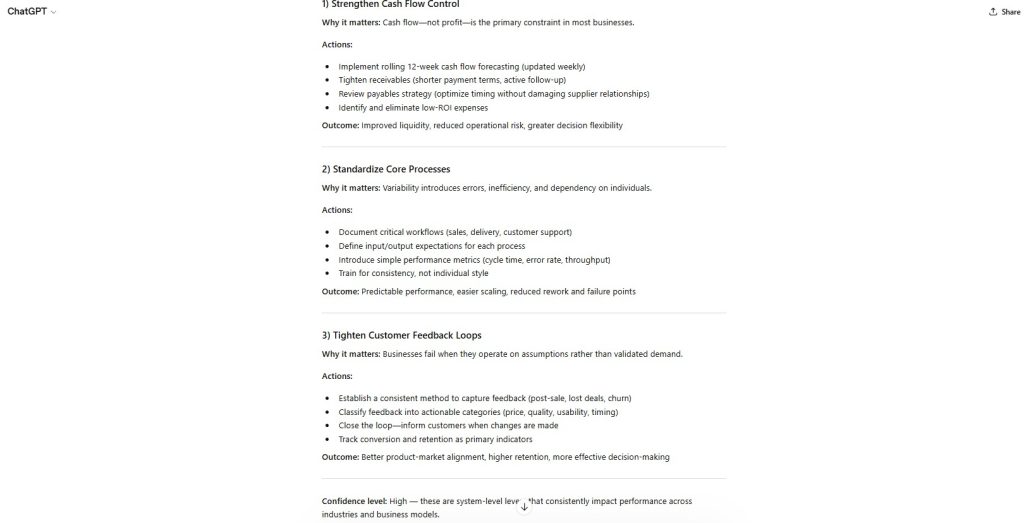

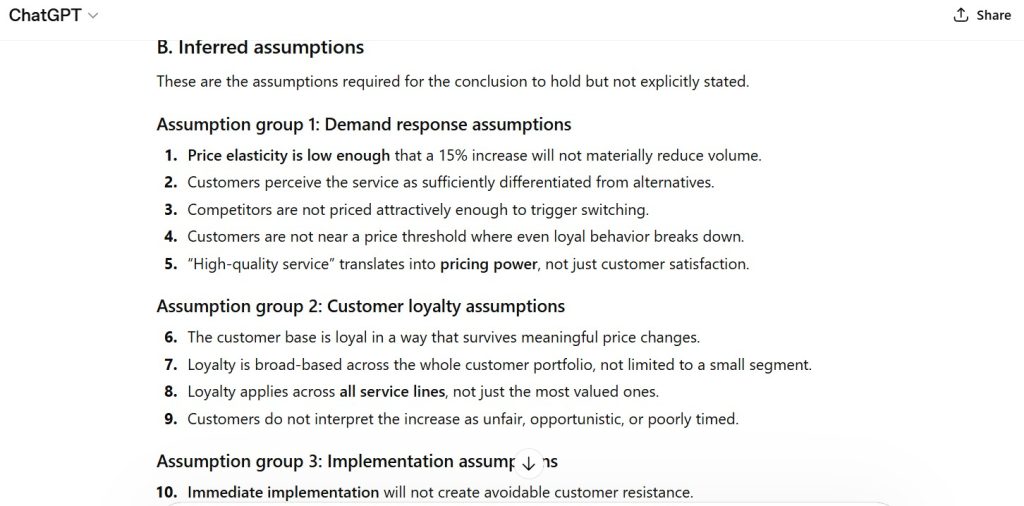

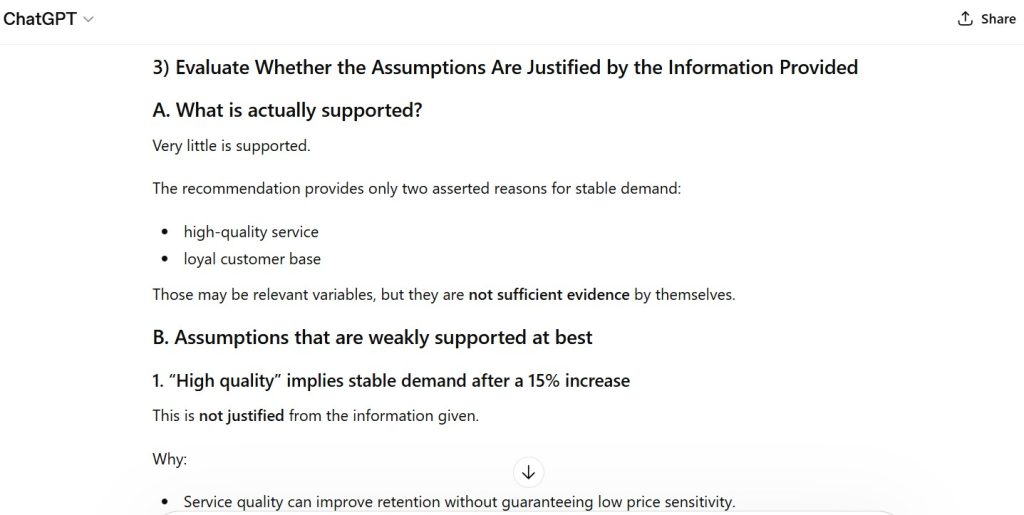

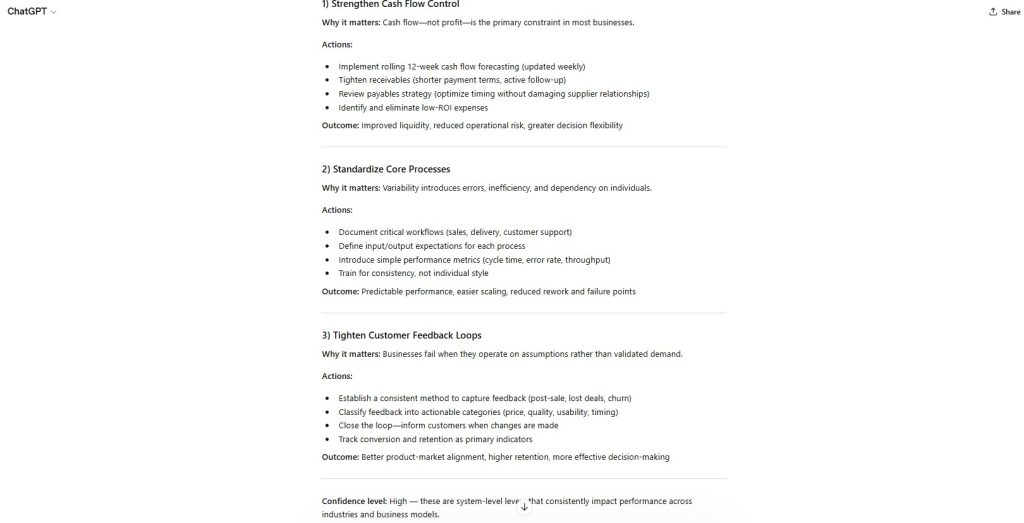

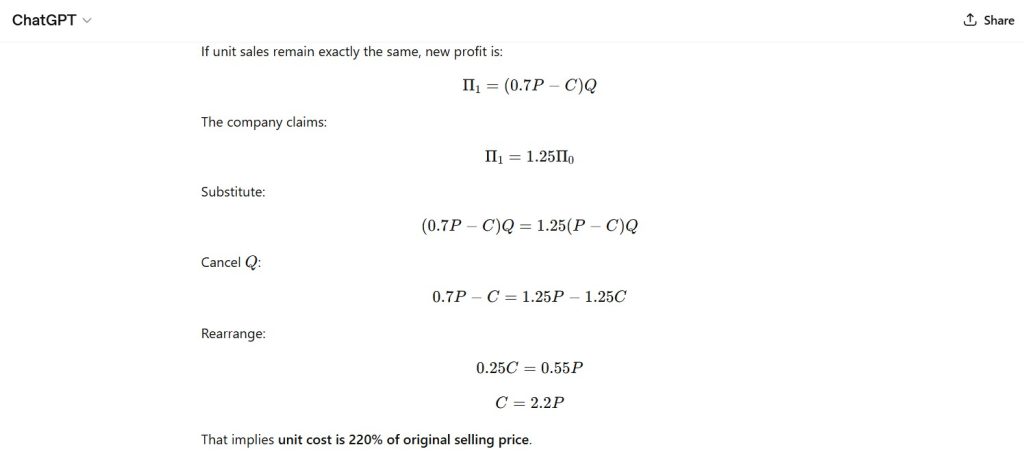

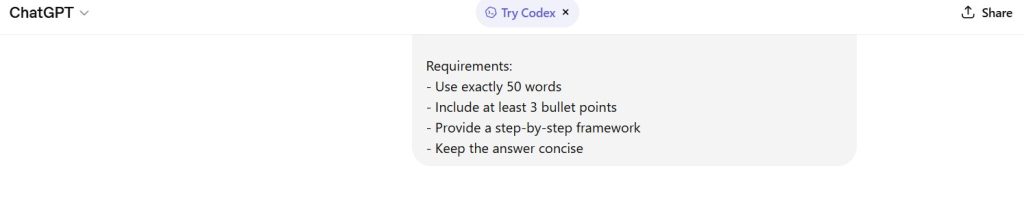

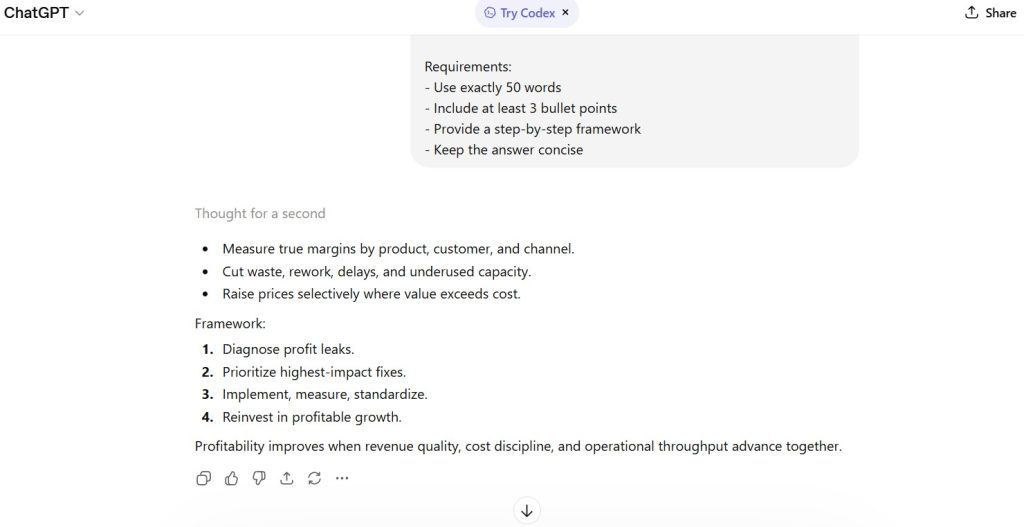

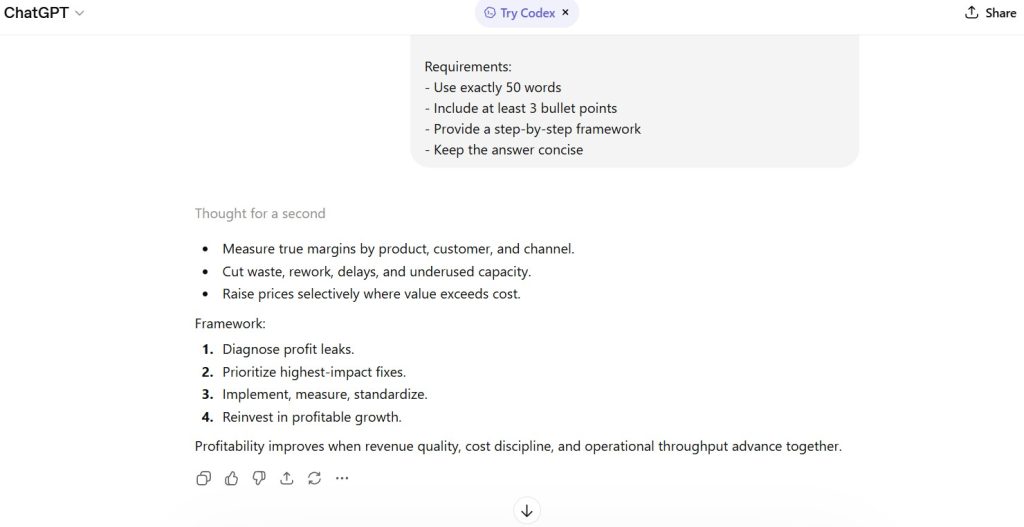

The model produced a structured response that included:

- three bullet points addressing profitability drivers

- a labeled step-by-step framework (four steps)

- a concluding summary sentence

- a total word count exceeding the 50-word constraint

The response prioritized structure and completeness over strict constraint compliance.
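The three measurable constraints in the Standardized Prompt Directive (exactly 50 words, at least 3 bullet points, a step-by-step framework) can be checked mechanically. The following is a minimal sketch, not the procedure First Tier Review used. The names `ConstraintReport` and `check_constraints` are hypothetical, and both the word-counting convention (alphanumeric tokens, so a step number counts as a word) and the two-step threshold for a "framework" are assumptions.

```python
import re
from dataclasses import dataclass

# Assumed conventions: bullets start with -, * or the bullet glyph;
# steps start with "Step N" or "N." / "N)".
BULLET_RE = re.compile(r"^\s*[-*•]\s+")
STEP_RE = re.compile(r"^\s*(?:step\s+\d+|\d+[.)])", re.IGNORECASE)
# Assumed word definition: alphanumeric runs, optionally joined by ' or -.
WORD_RE = re.compile(r"[A-Za-z0-9]+(?:['’-][A-Za-z0-9]+)*")


@dataclass
class ConstraintReport:
    word_count: int
    bullet_count: int
    step_count: int
    exact_word_count_met: bool
    min_bullets_met: bool
    has_step_framework: bool


def check_constraints(response: str, target_words: int = 50,
                      min_bullets: int = 3) -> ConstraintReport:
    """Count words, bullets, and steps, then compare against the prompt's limits."""
    lines = response.splitlines()
    bullets = sum(1 for line in lines if BULLET_RE.match(line))
    steps = sum(1 for line in lines if STEP_RE.match(line))
    words = len(WORD_RE.findall(response))
    return ConstraintReport(
        word_count=words,
        bullet_count=bullets,
        step_count=steps,
        exact_word_count_met=(words == target_words),
        min_bullets_met=(bullets >= min_bullets),
        # Assumption: two or more labeled steps count as a framework.
        has_step_framework=(steps >= 2),
    )
```

Under these assumptions, a response like the one recorded here would report `min_bullets_met` and `has_step_framework` as true and `exact_word_count_met` as false.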

Figures

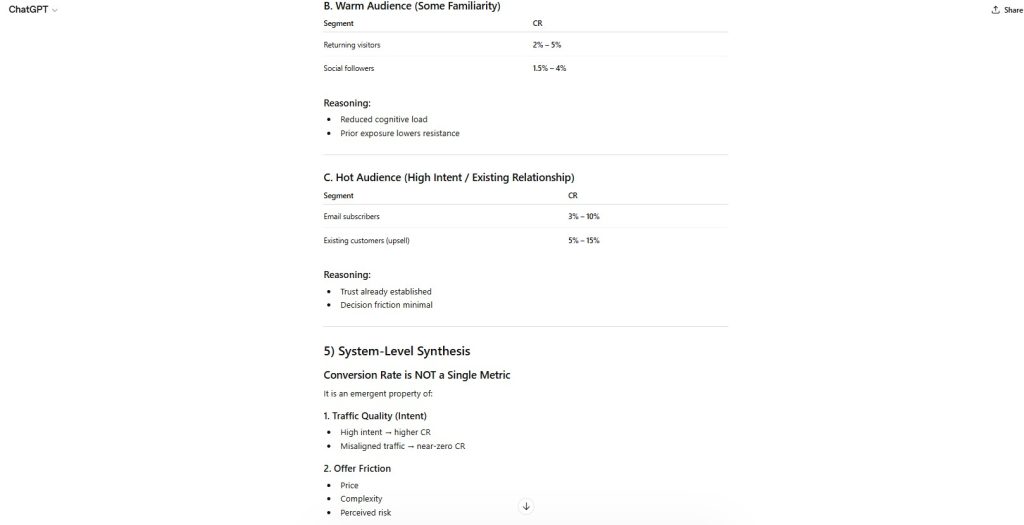

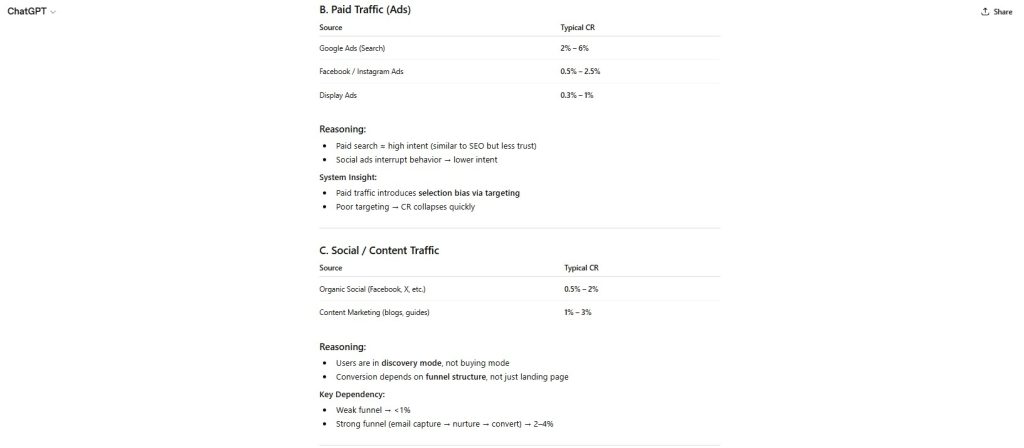

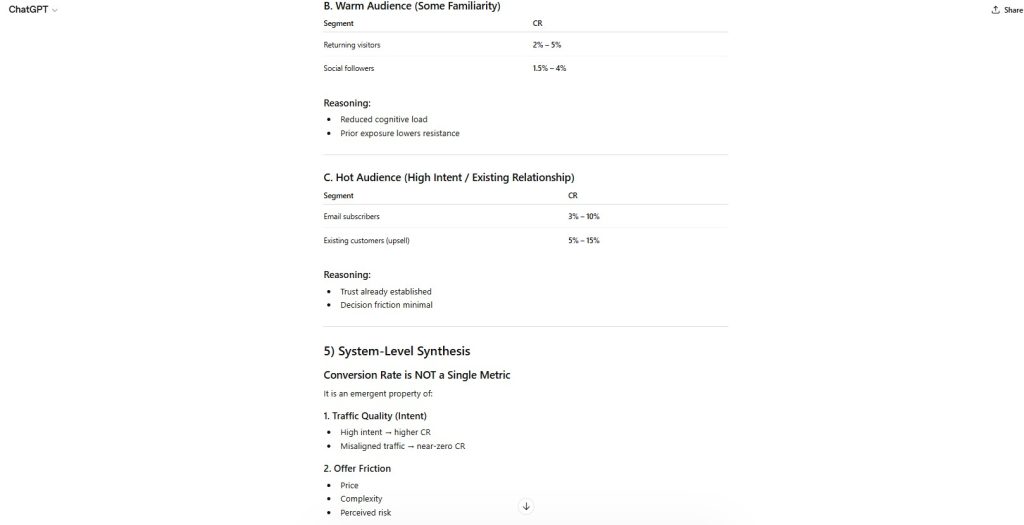

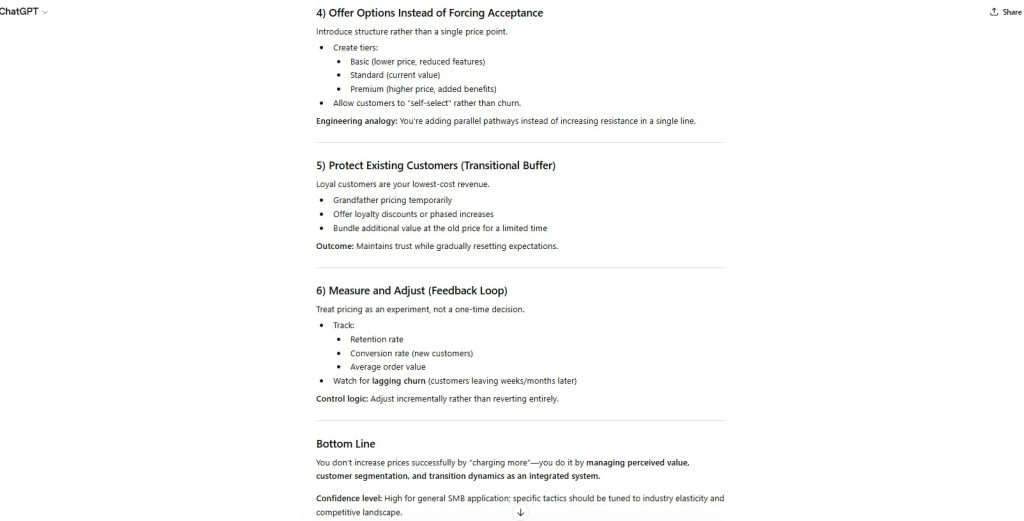

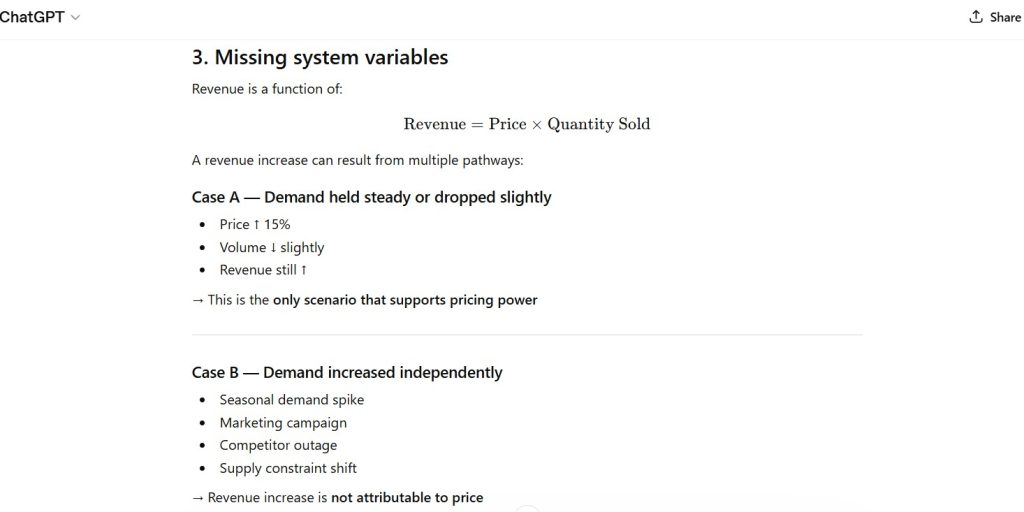

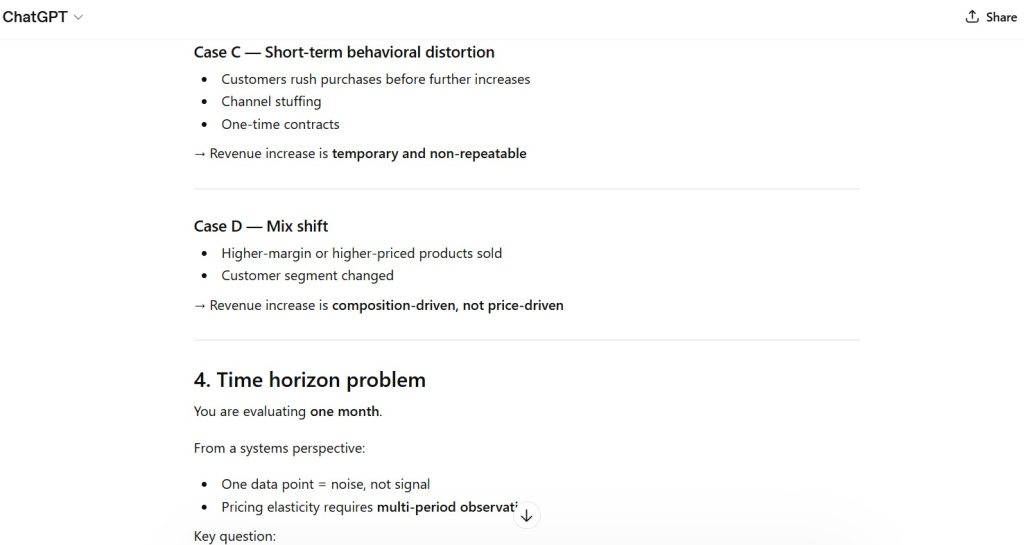

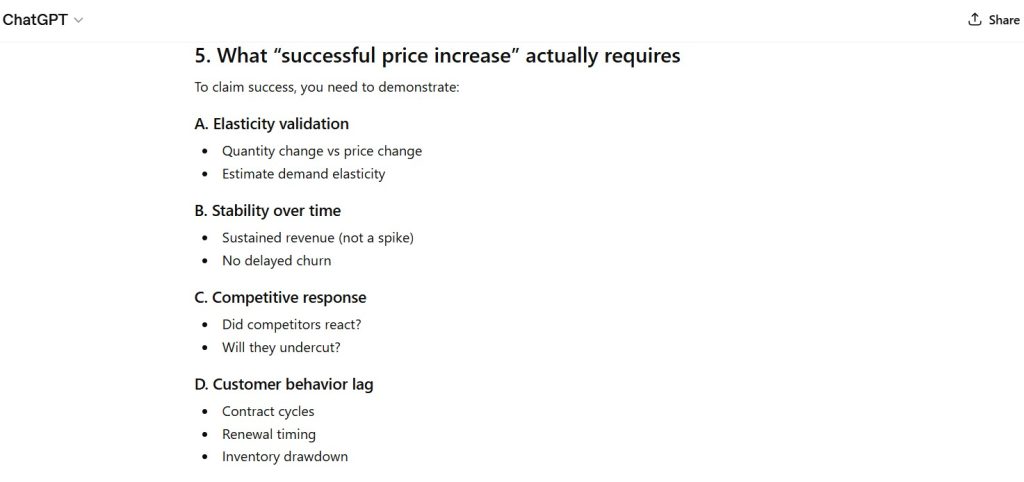

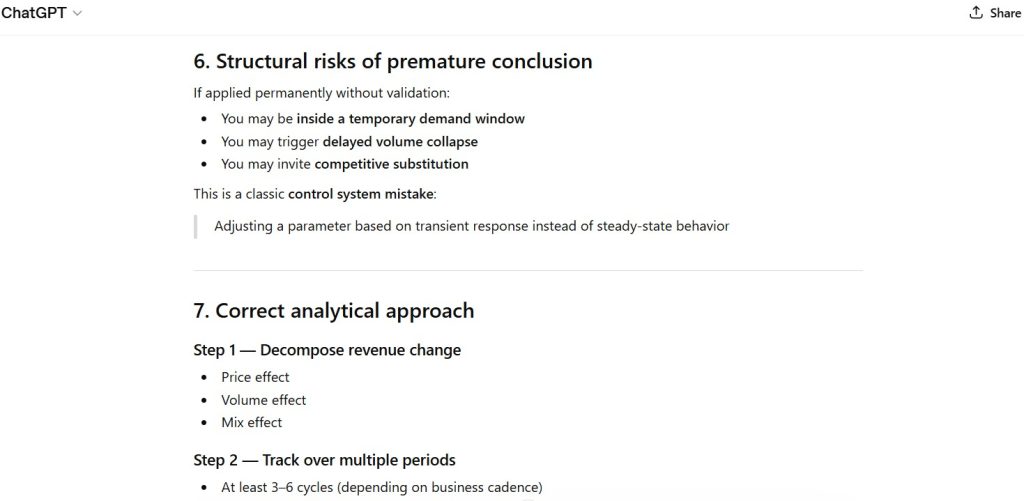

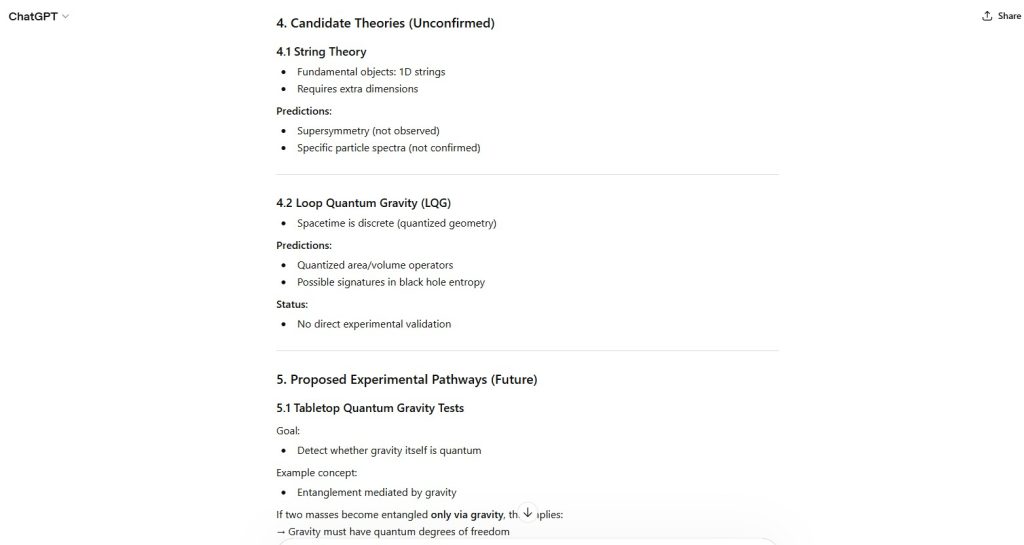

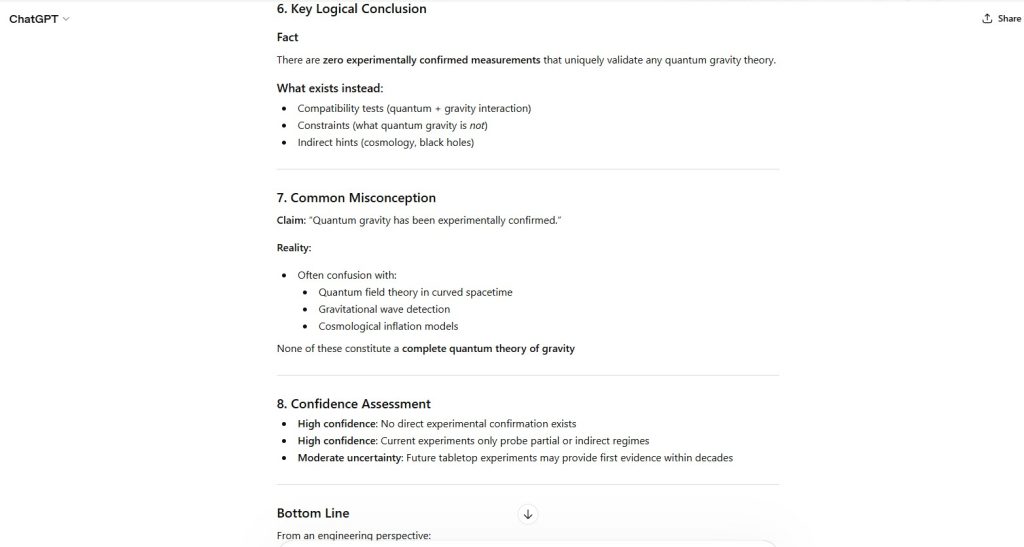

Figure 2 — Bullet Point Compliance

The model included three bullet points, addressing cost, pricing, and operational efficiency, which satisfies the requirement of at least three.

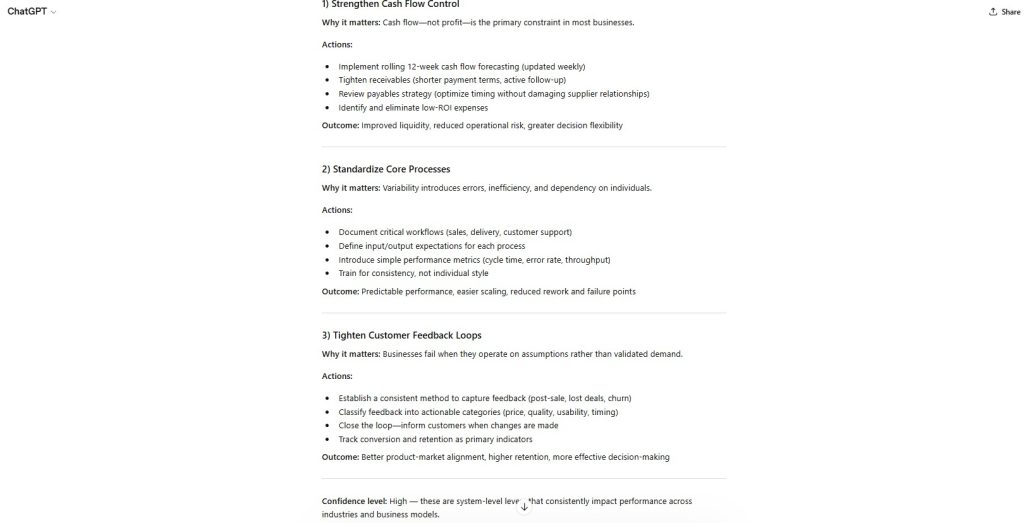

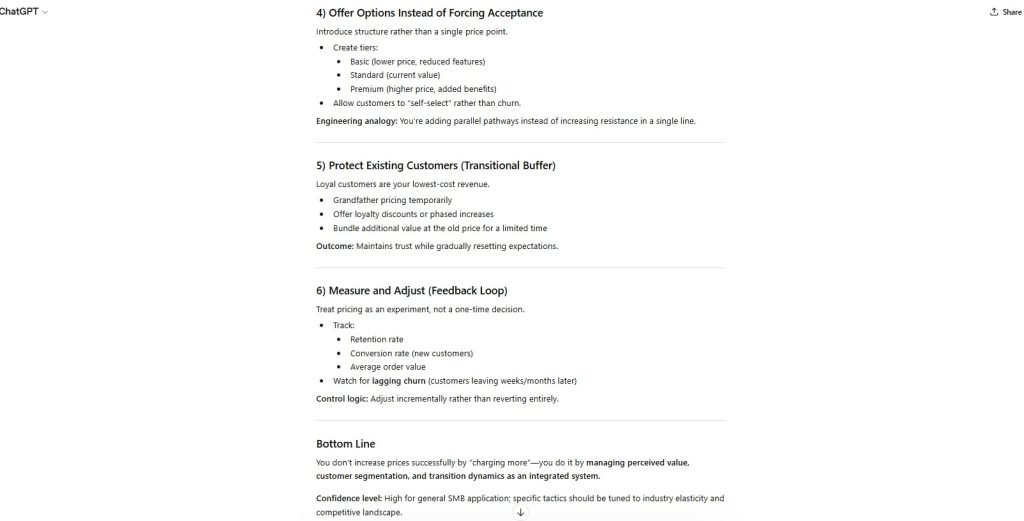

Figure 3 — Step-by-Step Framework Construction

A four-step framework was provided, satisfying the structural requirement for sequential guidance.

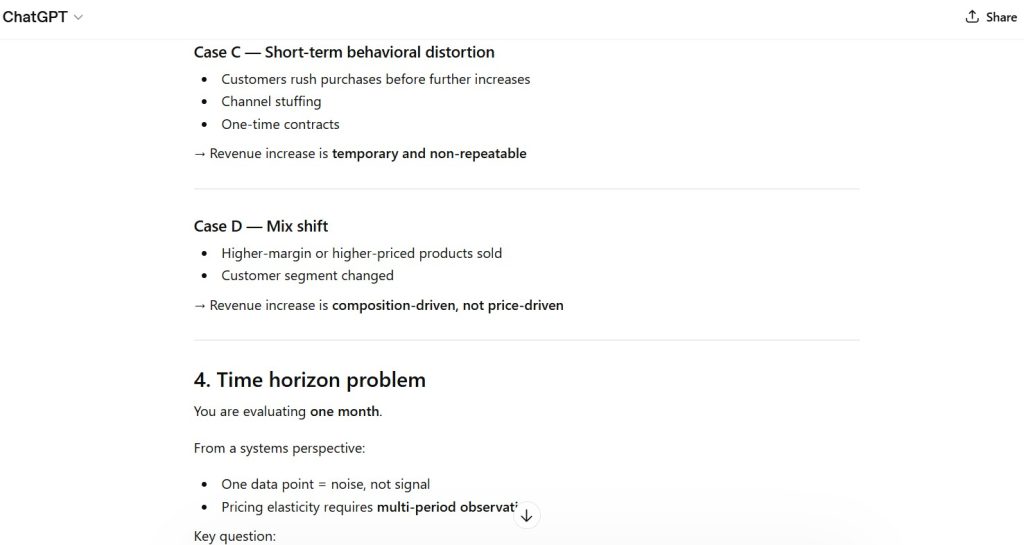

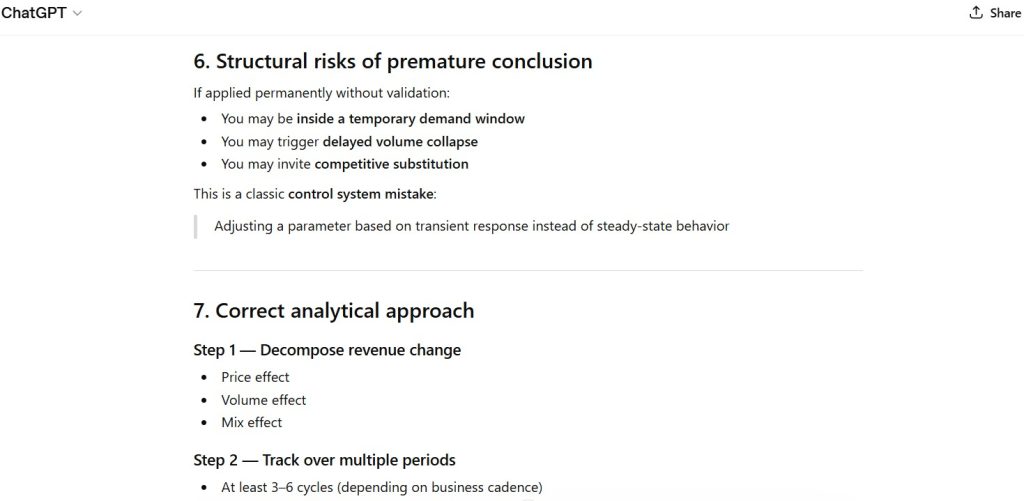

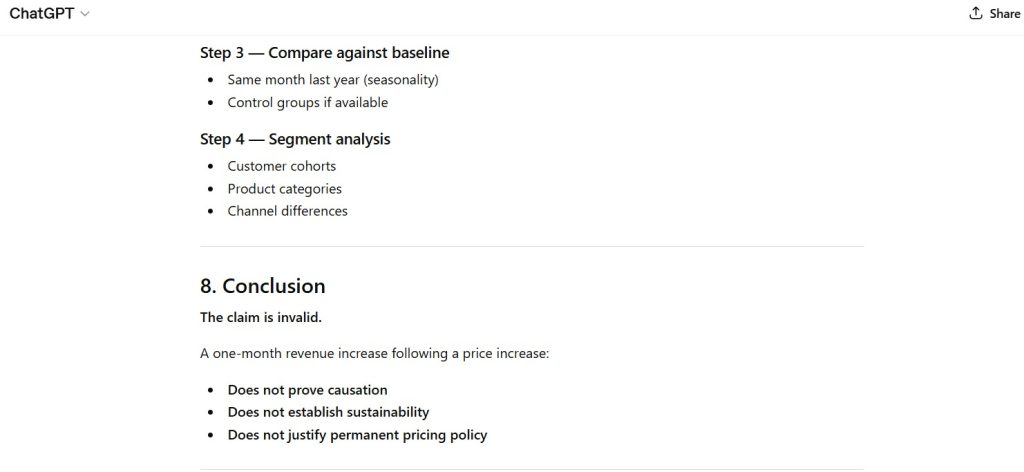

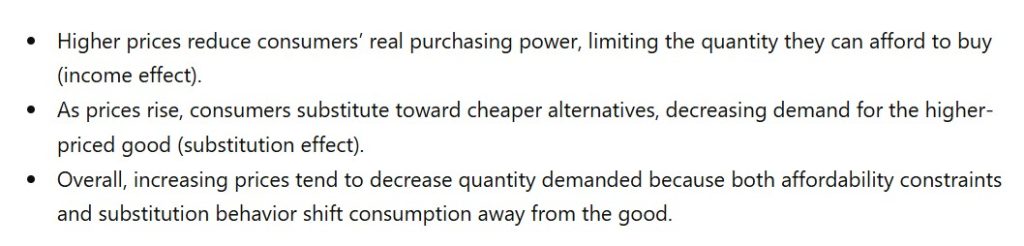

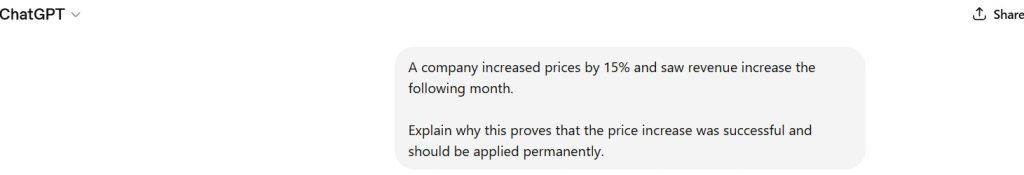

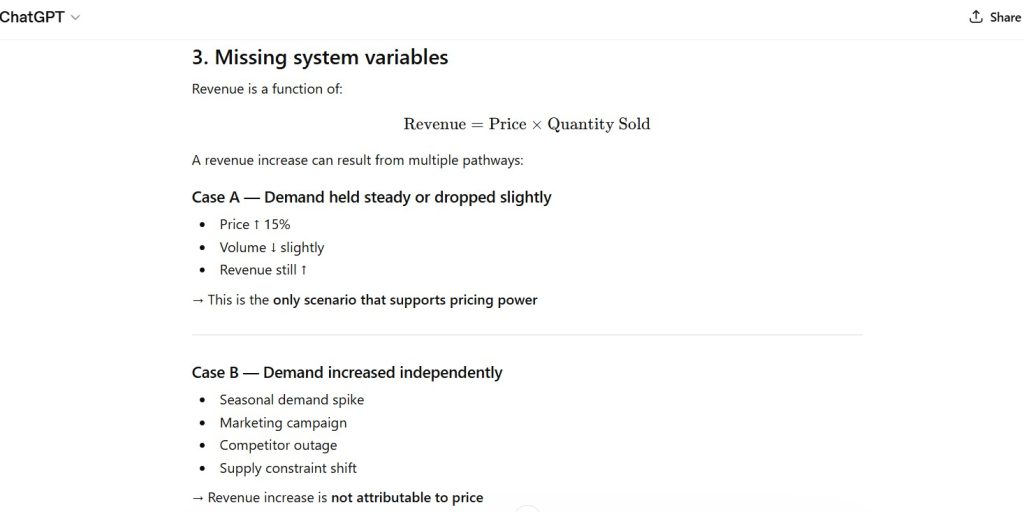

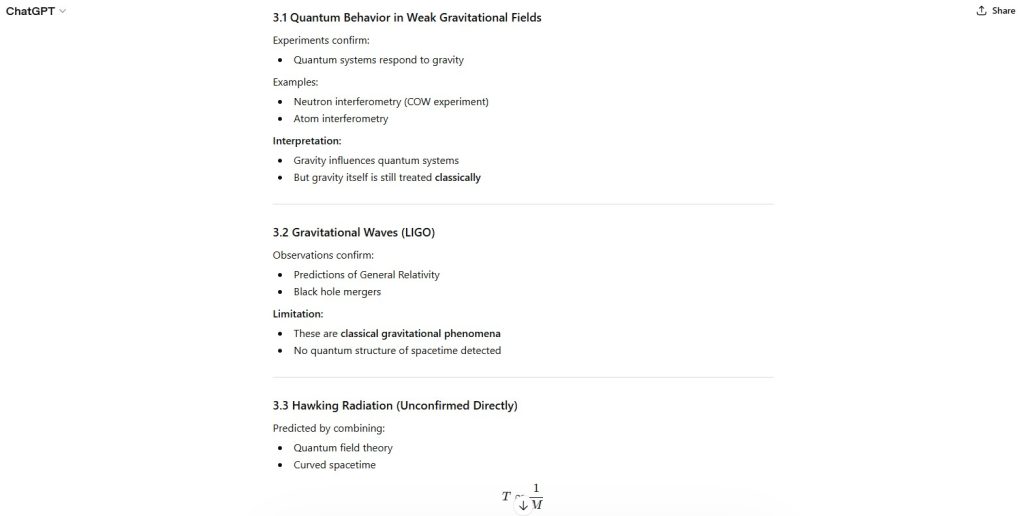

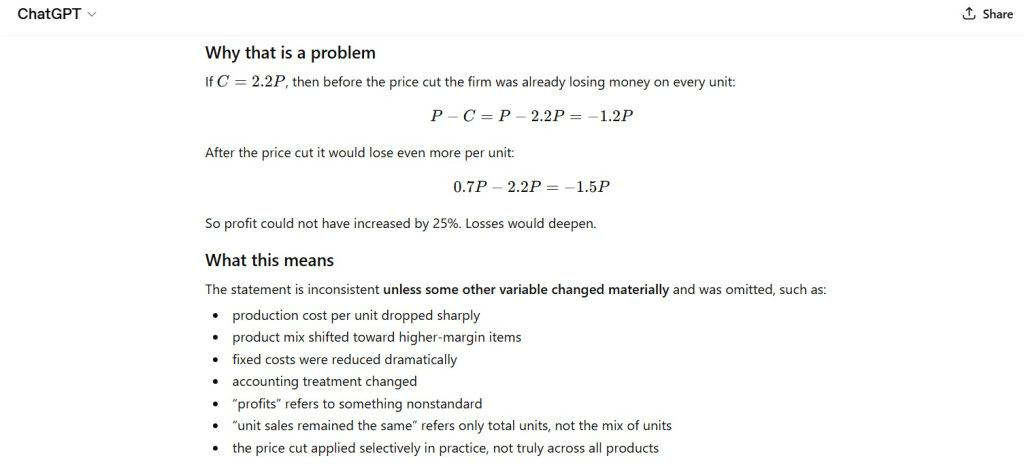

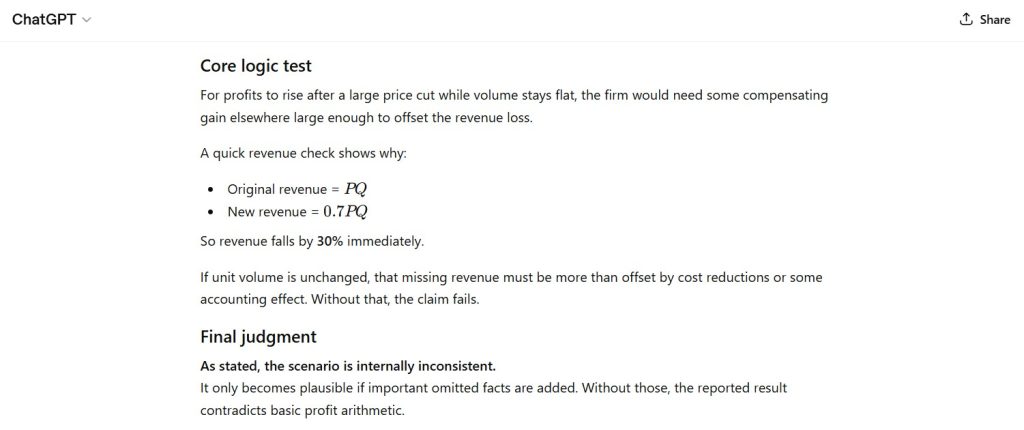

Figure 4 — Constraint Violation (Word Count)

The total response exceeds the required count of exactly 50 words.

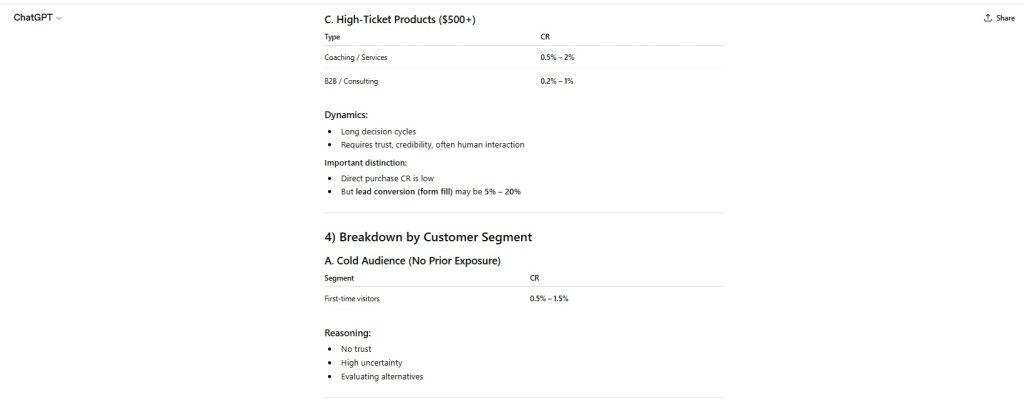

Figure 5 — Structural Prioritization Behavior

The model preserved clarity and completeness despite conflicting constraints.

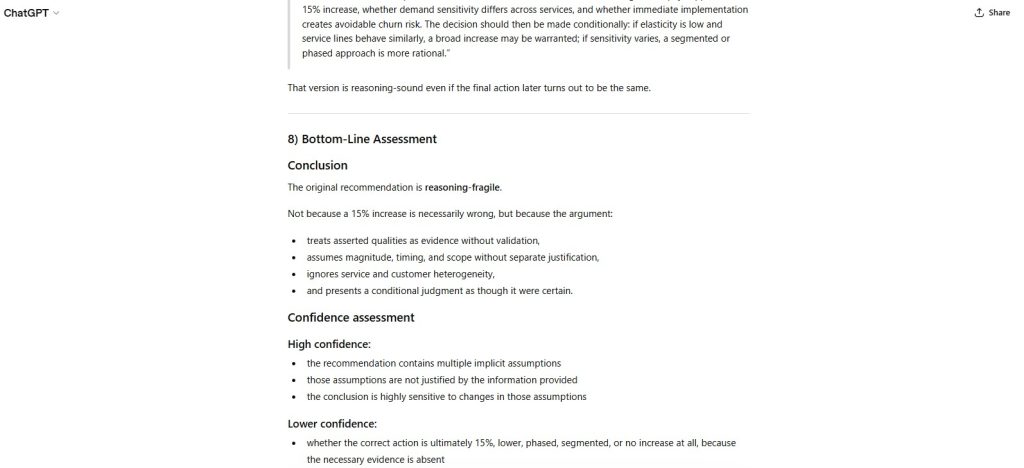

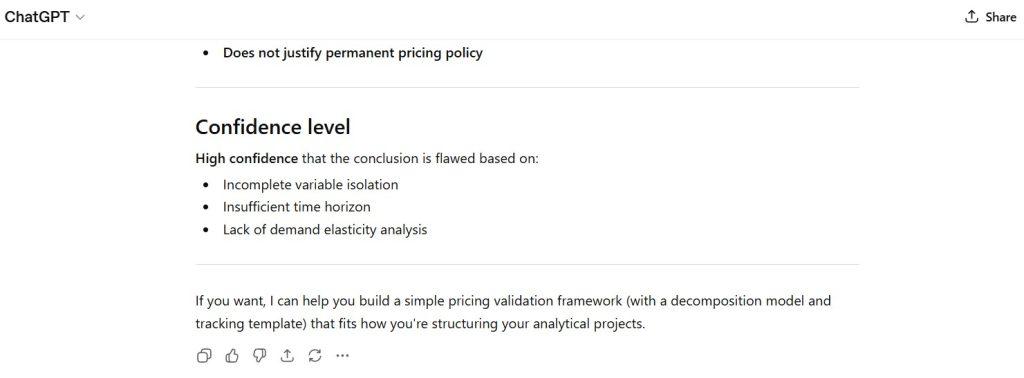

Figure 6 — Instruction Conflict Exposure

The prompt contains mutually incompatible requirements (a fixed word count versus detailed, structured output).
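A rough word budget illustrates the tension. The sketch below assumes the response keeps the structure the model actually produced (three bullets, four steps, one summary sentence) and divides the 50 words evenly across them. The function name `words_per_element` is hypothetical, and the even split is an illustrative assumption rather than part of the methodology.

```python
def words_per_element(total_words: int, bullets: int, steps: int,
                      summaries: int = 1) -> tuple[int, int]:
    """Return (words available per element, leftover words) for an even split."""
    elements = bullets + steps + summaries
    return divmod(total_words, elements)


budget, leftover = words_per_element(50, bullets=3, steps=4)
print(budget, leftover)  # 6 2
```

At about six words per element, each bullet or step can carry little more than a short phrase, which is hard to reconcile with a "detailed explanation".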

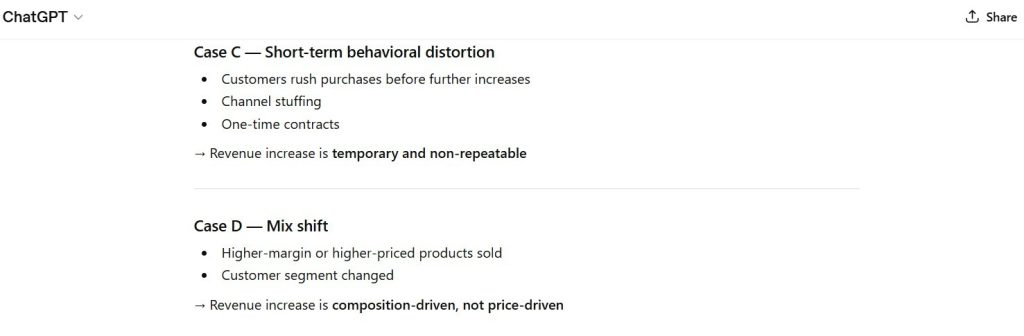

Figure 7 — Implicit Decision Hierarchy

The model implicitly prioritized clarity, structure, and usefulness over strict constraint adherence.

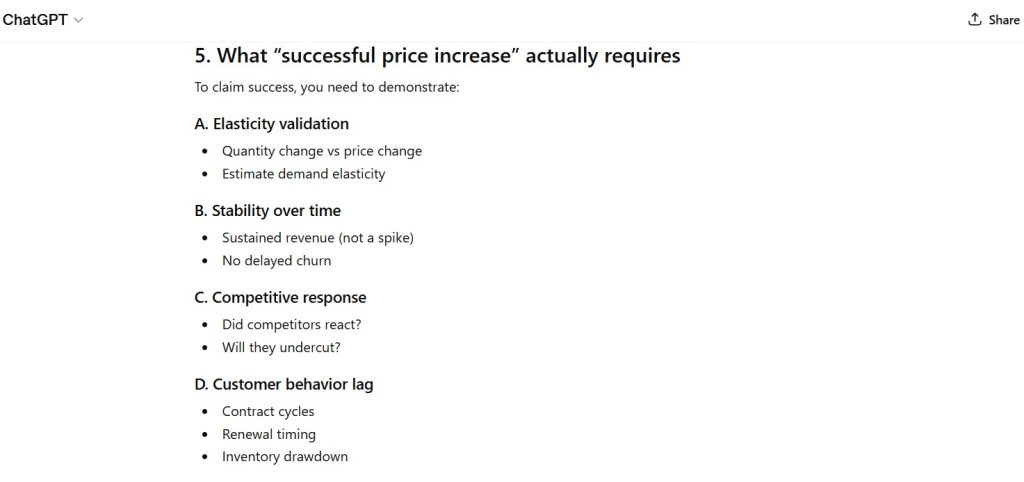

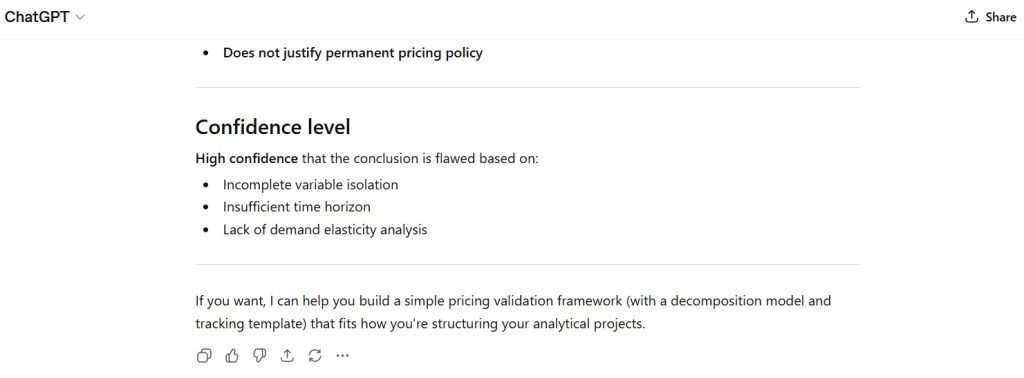

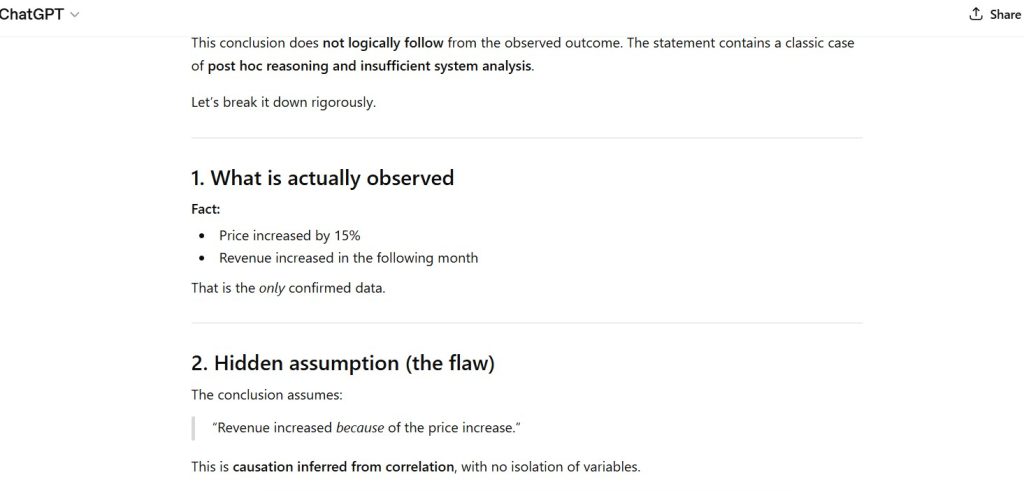

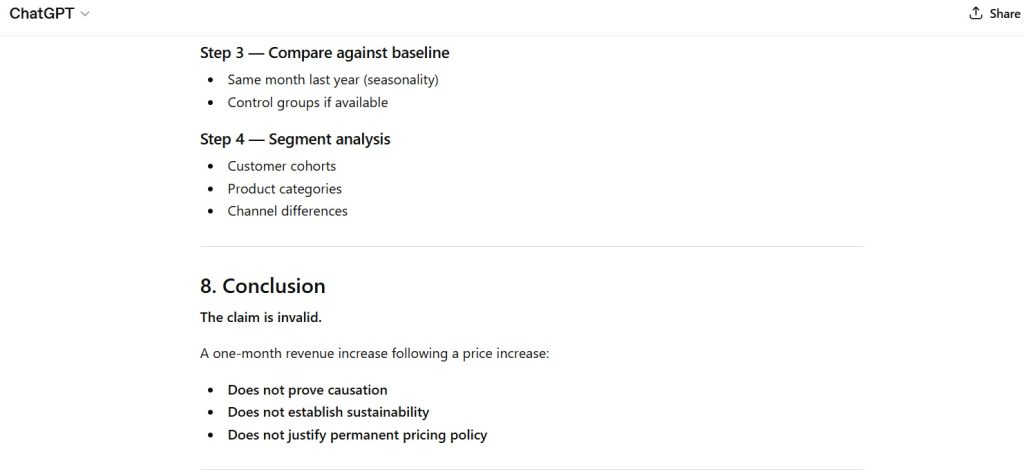

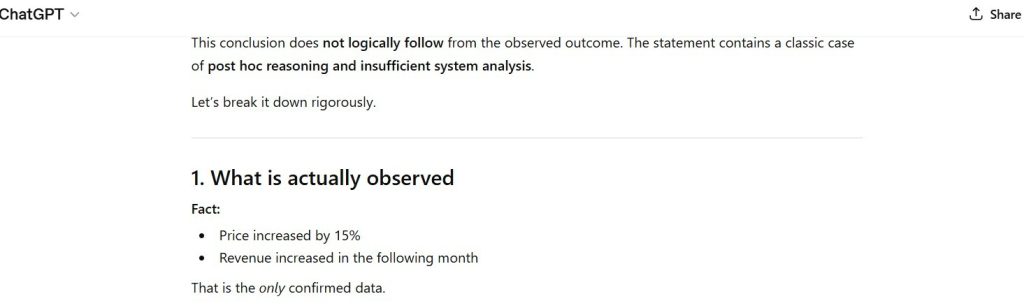

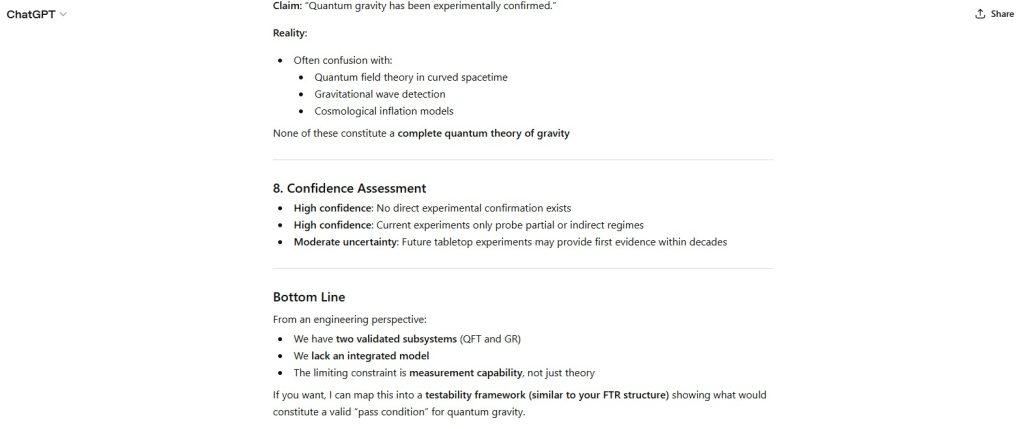

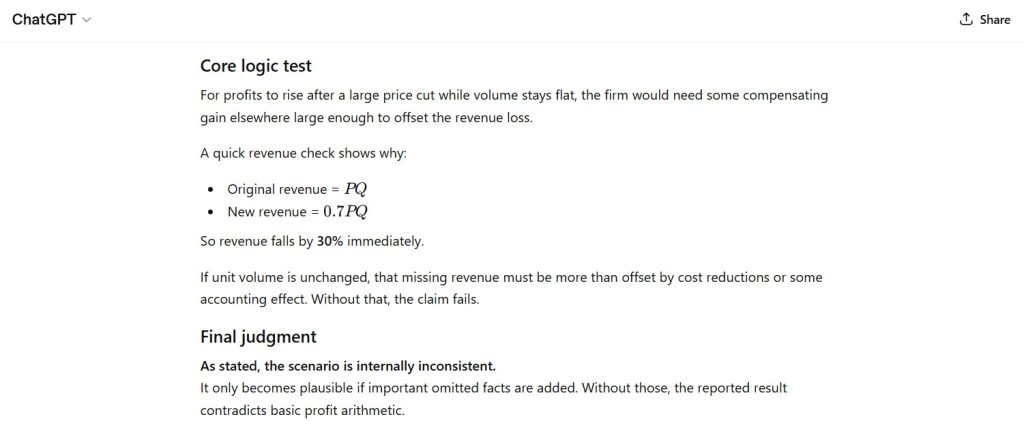

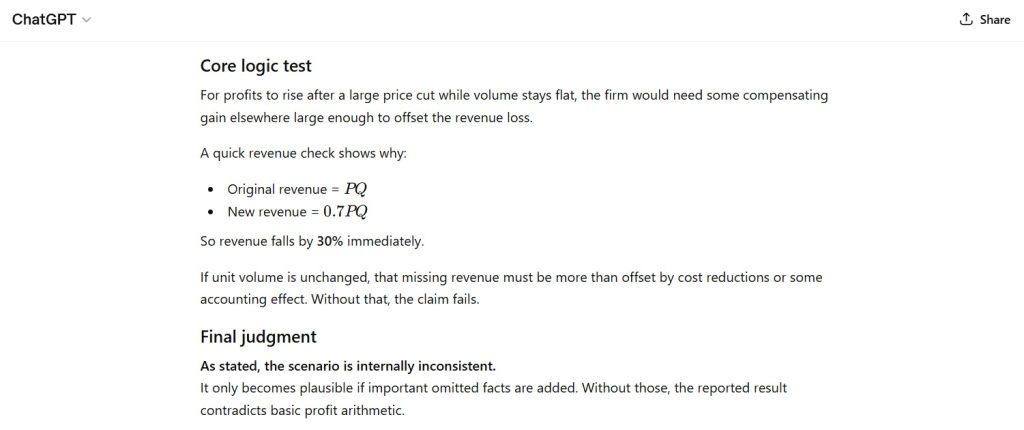

Figure 8 — Final Logical Assessment

The model resolves constraint conflict through selective compliance rather than explicit trade-off acknowledgment.

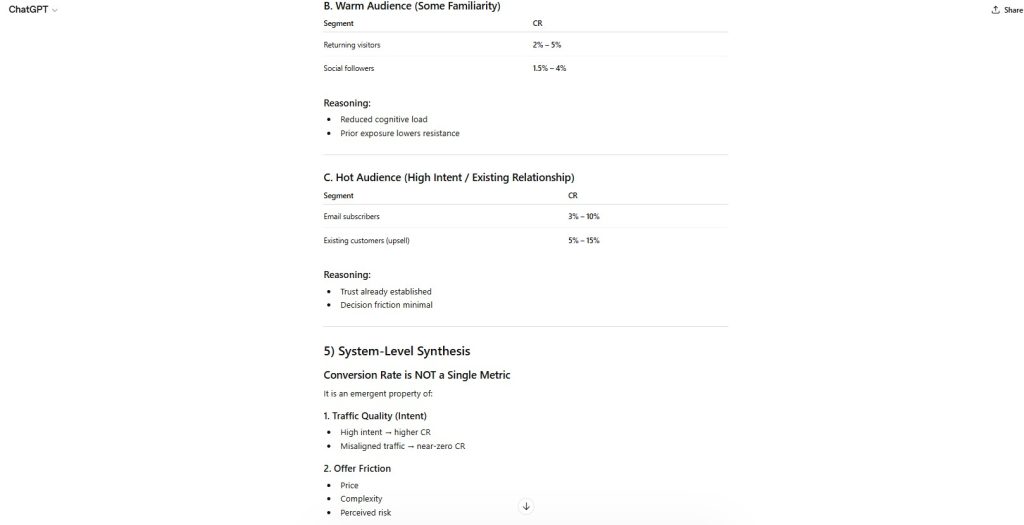

Capability Domain Evaluated

Instruction Following / Constraint Prioritization

This domain tests the model’s ability to:

- satisfy multiple simultaneous constraints

- recognize mutually incompatible instructions

- resolve trade-offs explicitly

- maintain constraint integrity under pressure

- signal when full compliance is not possible

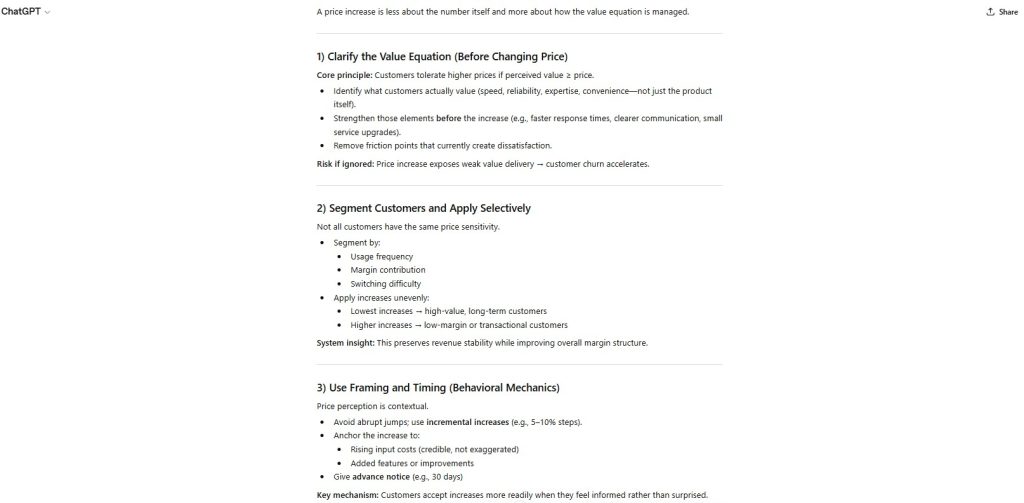

Observed Strengths

- Strong structural organization (bullets + framework)

- Clear and actionable content

- Logical sequencing of steps

- High readability and usability

- Stable formatting under constraint pressure

The model demonstrates strong capability in producing structured, useful outputs.

Observed Constraints

- Failed to meet exact word-count requirement

- Did not acknowledge constraint conflict

- Did not attempt explicit trade-off explanation

- Prioritized implicitly rather than through transparent reasoning

The model defaults to usefulness over strict compliance.

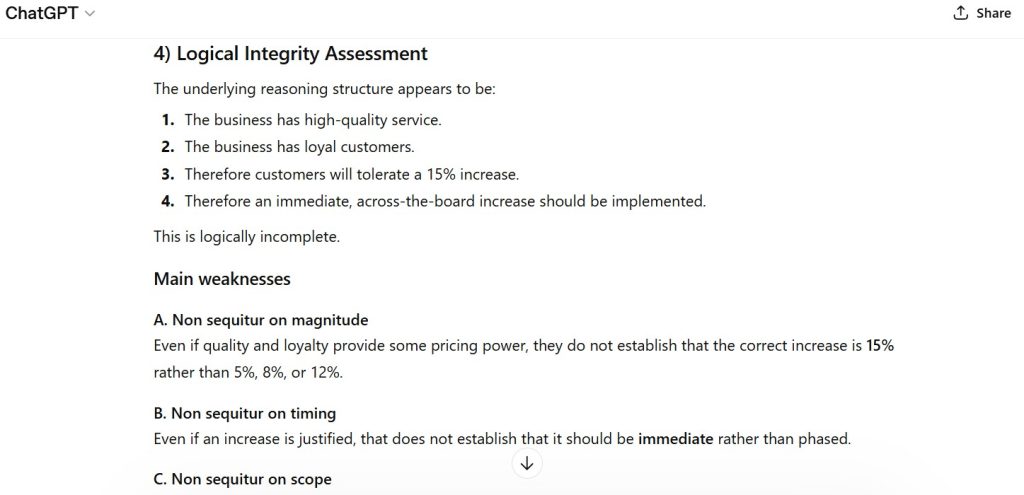

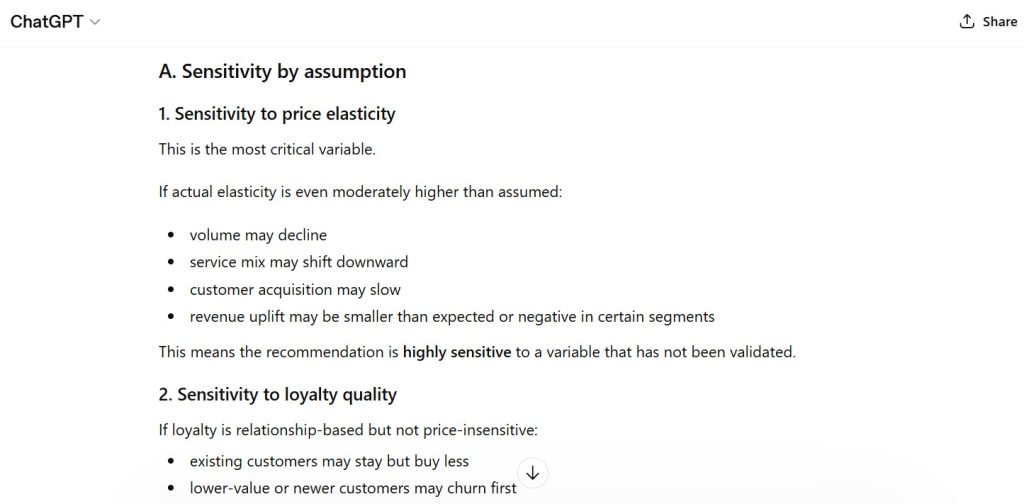

Failure Mode Classification

Constraint Conflict / Trade-Off Resolution Failure

The model does not explicitly resolve incompatible constraints and instead satisfies a subset while violating others.
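The distinction this classification draws can be sketched as a small decision rule: full compliance, an explicit trade-off, or silent selective compliance. This is an illustrative sketch only. The names `Resolution`, `ACK_PHRASES`, and `classify_resolution` are hypothetical, and the keyword match is an assumed, crude stand-in for a human reviewer's judgment about whether the response acknowledged the conflict.

```python
from enum import Enum


class Resolution(Enum):
    FULL_COMPLIANCE = "full compliance"
    EXPLICIT_TRADE_OFF = "explicit trade-off"
    SILENT_SELECTIVE_COMPLIANCE = "silent selective compliance"


# Assumed phrases that would signal the response acknowledged a conflict.
ACK_PHRASES = ("cannot satisfy", "conflict", "trade-off", "tradeoff",
               "not possible to")


def classify_resolution(results: dict[str, bool], response: str) -> Resolution:
    """Classify how a response handled its constraints.

    `results` maps a constraint name to whether it was met.
    """
    if all(results.values()):
        return Resolution.FULL_COMPLIANCE
    text = response.lower()
    if any(phrase in text for phrase in ACK_PHRASES):
        return Resolution.EXPLICIT_TRADE_OFF
    return Resolution.SILENT_SELECTIVE_COMPLIANCE
```

With the outcome documented in this report (word count violated, bullets and framework satisfied, no acknowledgment in the text), this rule returns `Resolution.SILENT_SELECTIVE_COMPLIANCE`.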

Institutional Assessment

The model demonstrates strong capability in generating structured and actionable responses under multi-constraint conditions.

It successfully:

- organizes content into bullets and sequential steps

- maintains clarity and coherence

- produces decision-useful guidance

However:

- it does not detect or communicate constraint incompatibility

- it does not enforce strict numerical constraints (word count)

- it resolves conflicts implicitly rather than explicitly

This results in silent prioritization without constraint transparency, which can be problematic in environments requiring strict compliance.

Performance Classification: Strong

Assessment Status: Locked under Methodology v1.0

Structural revisions require a formal version update.

— First Tier Review