Registry ID: FTR-2026-009

Capability Domain: Cross-Model Stability & Comparative Robustness

Assessment Date: March 4, 2026

Model Evaluated: ChatGPT 5.3 Instant

Testing Framework: First Tier Review Methodology (v1.0)

Test Environment: Controlled, Documented Prompt Conditions

Test Classification: Cross-Model Stability Assessment

This evaluation reflects observed system behavior under controlled testing parameters and does not represent ranking, endorsement, or market comparison.

Citation Record

First Tier Review. (2026).

FTR Test #9 — Cross-Model Stability & Comparative Robustness.

First Tier Review Methodology v1.0 Evaluation Report.

Available at:

https://firsttierreview.com/ftr-test-9-cross-model-stability-comparative-robustness/

Model Under Evaluation

This assessment evaluates ChatGPT 5.3 Instant as the reference model under First Tier Review Methodology (v1.0).

Additional AI systems will be evaluated under identical controlled prompt conditions and structural assessment standards in subsequent reports.

No cross-model comparison is made within this document.

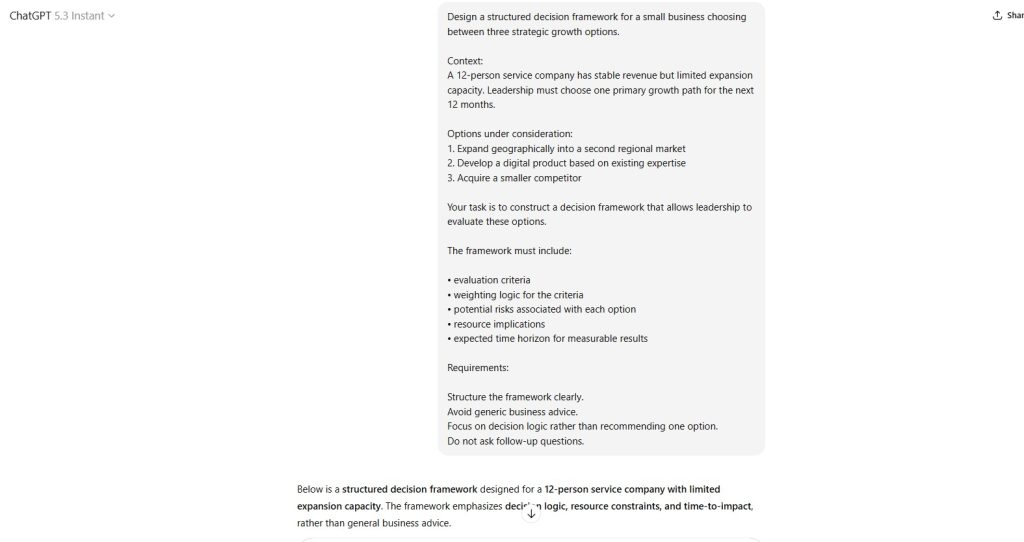

Standardized Prompt Directive (Verbatim)

Design a structured decision framework for a small business choosing between three strategic growth options.

Context:

A 12-person service company has stable revenue but limited expansion capacity. Leadership must choose one primary growth path for the next 12 months.

Options under consideration:

- Expand geographically into a second regional market

- Develop a digital product based on existing expertise

- Acquire a smaller competitor

Your task is to construct a decision framework that allows leadership to evaluate these options.

The framework must include:

• evaluation criteria

• weighting logic for the criteria

• potential risks associated with each option

• resource implications

• expected time horizon for measurable results

Requirements:

Structure the framework clearly.

Avoid generic business advice.

Focus on decision logic rather than recommending one option.

Do not ask follow-up questions.

Documented Input (Prompt Record)

See attached screenshot record (Controlled Test Input).

Figure 1 — Standardized Prompt Directive

Documented AI Output (Model Response Record)

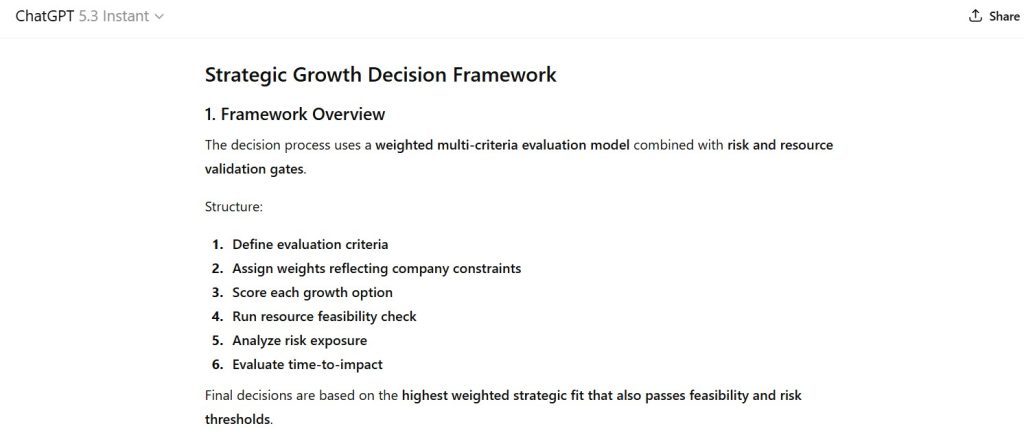

The model produced:

• A multi-stage strategic decision framework

• A weighted evaluation model for comparing growth options

• Explicit criteria definitions aligned with service-company constraints

• A resource feasibility assessment structure

• Option-specific risk analysis sections

• A structured time-to-impact comparison

• A final decision scoring matrix with weighting formula

The output was organized sequentially and followed structured strategic evaluation logic.
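A minimal sketch of how a weighted scoring matrix of this kind could be computed is given here for orientation. It is not the model's formula. The criterion names follow the six criteria listed under Figure 3; the weights, the 1-5 scoring scale, and the example scores are hypothetical placeholders chosen only to make the arithmetic concrete.

```python
# Illustrative only: weights and scores are hypothetical placeholders,
# not values taken from the model output or from this report.
CRITERIA_WEIGHTS = {
    "strategic_fit": 0.25,
    "scalability": 0.20,
    "operational_complexity": 0.15,
    "capital_requirements": 0.15,
    "leadership_bandwidth": 0.15,
    "time_to_revenue": 0.10,
}


def weighted_score(scores, weights=CRITERIA_WEIGHTS):
    """Return the weighted sum of per-criterion scores (assumed 1-5 scale)."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


# Hypothetical scores for a single option, for demonstration.
example_scores = {
    "strategic_fit": 4,
    "scalability": 3,
    "operational_complexity": 2,
    "capital_requirements": 3,
    "leadership_bandwidth": 2,
    "time_to_revenue": 4,
}

print(round(weighted_score(example_scores), 2))
```

Scoring each option with the same weights yields directly comparable totals. Criteria where a higher raw value is worse, such as operational complexity, would need to be scored inversely before weighting.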

Figures (Output Evidence)

Figure 2 — Strategic Decision Framework Structure

Demonstrates the multi-stage decision architecture used to evaluate competing growth strategies.

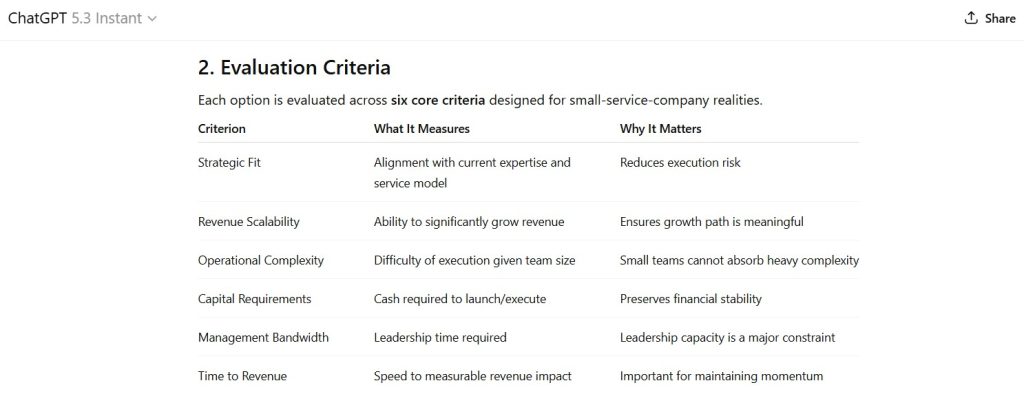

Figure 3 — Evaluation Criteria Definition

Shows the criteria used to assess each option, including strategic fit, scalability, operational complexity, capital requirements, leadership bandwidth, and time-to-revenue.

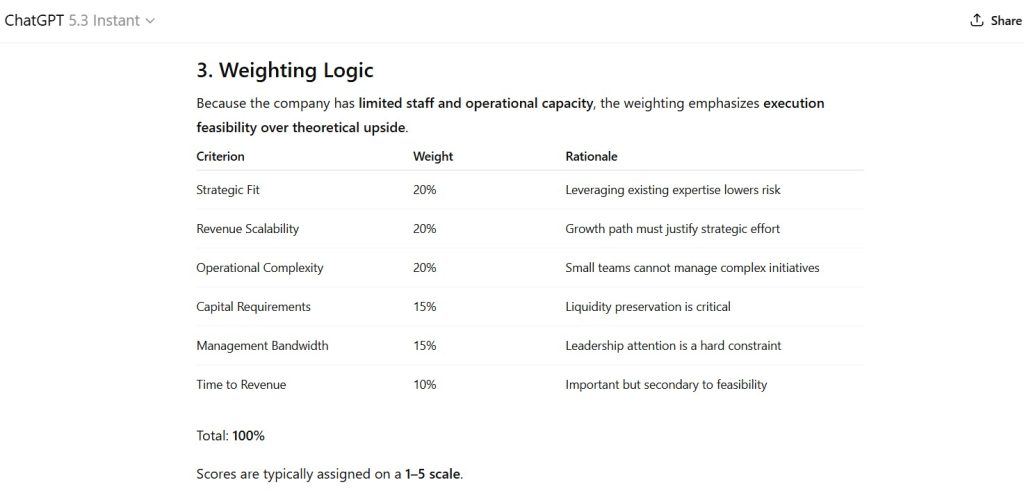

Figure 4 — Criteria Weighting Model

Illustrates the weighting logic used to balance strategic value against execution feasibility.

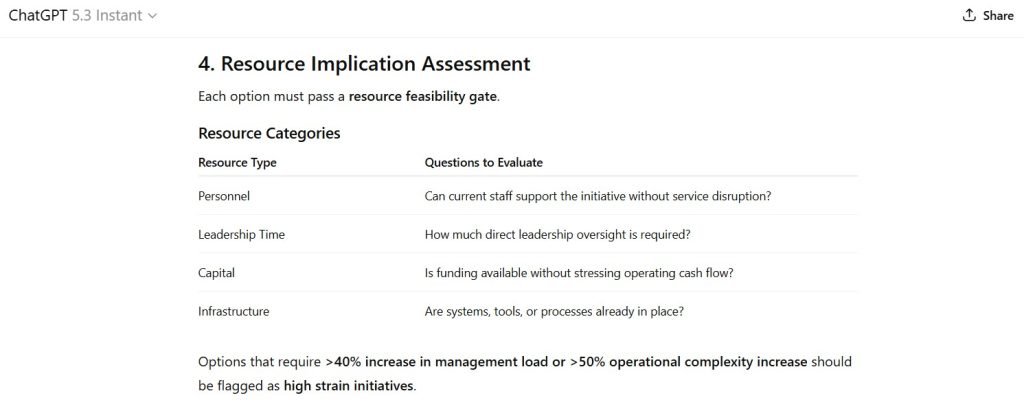

Figure 5 — Resource Feasibility Assessment

Displays the framework used to evaluate staffing, leadership attention, capital requirements, and operational infrastructure.

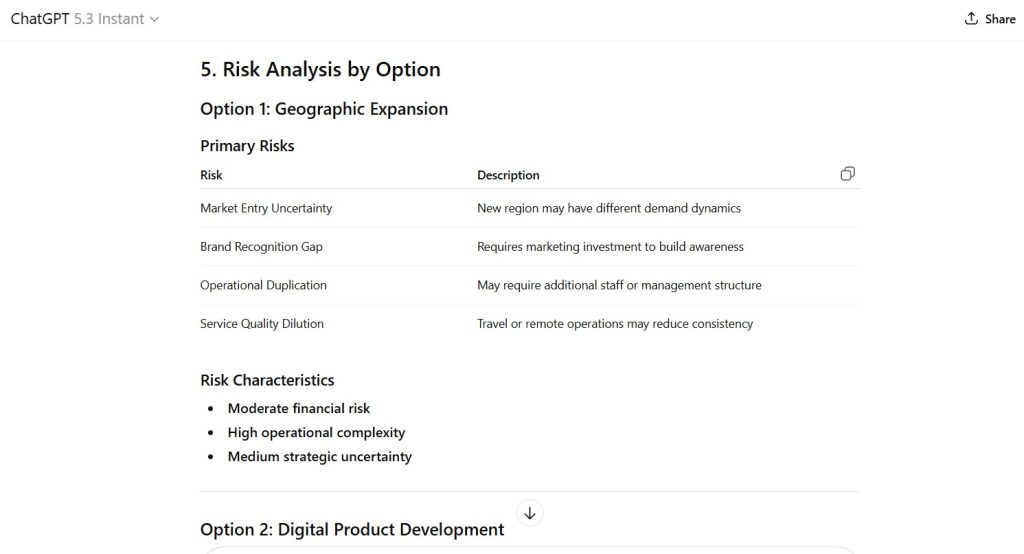

Figure 6 — Risk Analysis by Strategic Option

Documents structured risk identification for geographic expansion, digital product development, and competitor acquisition.

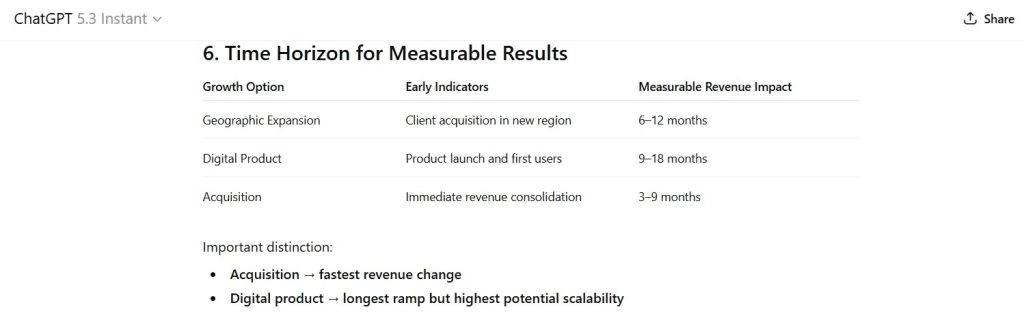

Figure 7 — Time Horizon Analysis

Shows projected timelines for measurable revenue impact across each growth option.

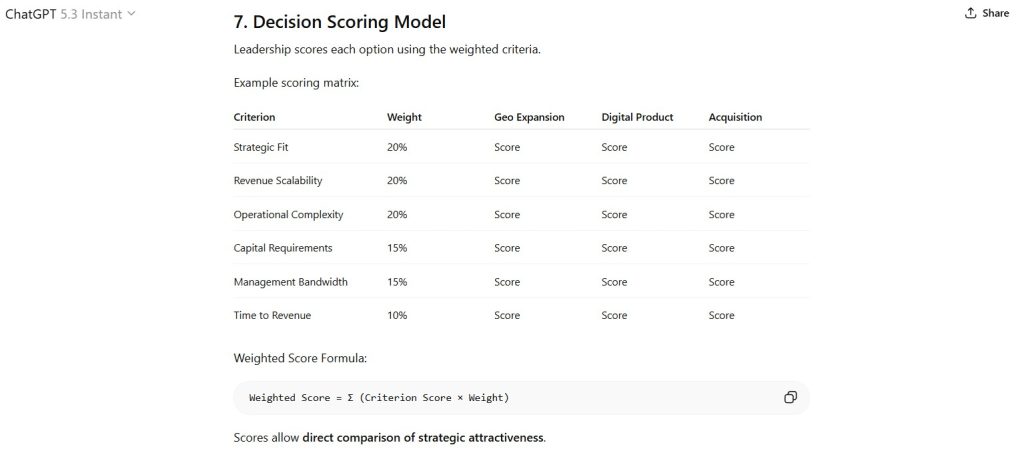

Figure 8 — Decision Scoring Model

Presents the weighted scoring structure used to compare options under the defined evaluation criteria.

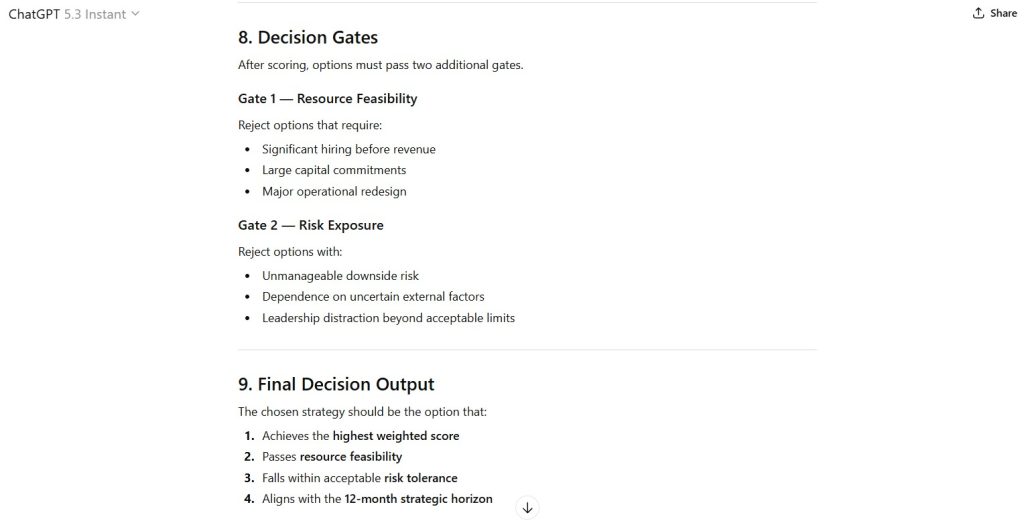

Figure 9 — Decision Gate Structure

Demonstrates the final filtering process requiring options to pass feasibility and risk thresholds.
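A decision gate of the kind described can be sketched as a threshold filter applied before options are ranked. The sketch below is an assumption about how such a gate might be implemented, not a reproduction of the model's output. The `OptionAssessment` type, the 0-1 scales, the threshold values, and all example numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class OptionAssessment:
    """Hypothetical record for one growth option."""
    name: str
    feasibility: float     # assumed 0-1 scale, higher means more feasible
    risk: float            # assumed 0-1 scale, higher means riskier
    weighted_score: float  # total from a weighted scoring model


def passes_gates(option, min_feasibility=0.6, max_risk=0.5):
    """An option passes only if it clears both thresholds."""
    return option.feasibility >= min_feasibility and option.risk <= max_risk


def shortlist(options, **thresholds):
    """Drop options that fail a gate, then rank survivors by score."""
    survivors = [o for o in options if passes_gates(o, **thresholds)]
    return sorted(survivors, key=lambda o: o.weighted_score, reverse=True)


# Placeholder values only; they do not reflect any assessed result.
example_options = [
    OptionAssessment("geographic_expansion", feasibility=0.7, risk=0.4, weighted_score=3.4),
    OptionAssessment("digital_product", feasibility=0.8, risk=0.3, weighted_score=3.1),
    OptionAssessment("competitor_acquisition", feasibility=0.5, risk=0.6, weighted_score=3.9),
]

for option in shortlist(example_options):
    print(option.name, option.weighted_score)
```

Applying the gate before ranking means an option with the highest weighted score can still be excluded if it fails a feasibility or risk threshold, which is the filtering behavior the caption describes.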

Capability Domain Evaluated

Cross-Model Stability & Comparative Robustness

This domain tests the model’s ability to:

• Maintain structural consistency in decision frameworks

• Produce repeatable analytical architectures under identical prompts

• Construct comparative reasoning models across competing options

• Preserve logical coherence across multi-stage evaluation processes

Observed Strengths

• Clear multi-stage decision architecture

• Explicit criteria weighting tied to organizational constraints

• Structured risk identification across competing strategies

• Logical sequencing from evaluation criteria to final decision gate

Observed Constraints

• Scoring matrix presented as a conceptual model rather than calculated output

• No quantitative financial projections associated with options

• Risk probabilities not formally estimated

• Scenario sensitivity analysis not performed
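For reference, the last constraint can be made concrete with a simple weight-sensitivity check. The sketch below is one common approach and is an assumption of this illustration, not part of the model output or of the First Tier Review methodology: each criterion weight is shifted up and down by a fixed amount, the weights are renormalized, and any shift that changes the top-ranked option is recorded. All names and numbers are hypothetical.

```python
def rank(option_scores, weights):
    """Return option names ordered by weighted total, highest first."""
    totals = {
        name: sum(weights[c] * scores[c] for c in weights)
        for name, scores in option_scores.items()
    }
    return sorted(totals, key=totals.get, reverse=True)


def weight_sensitivity(option_scores, weights, delta=0.05):
    """Shift each weight by -delta and +delta, renormalize, and list the
    (criterion, shift) pairs that change the top-ranked option."""
    baseline_top = rank(option_scores, weights)[0]
    flips = []
    for criterion in weights:
        for shift in (-delta, delta):
            adjusted = dict(weights)
            adjusted[criterion] = max(0.0, adjusted[criterion] + shift)
            total = sum(adjusted.values())
            adjusted = {c: w / total for c, w in adjusted.items()}
            if rank(option_scores, adjusted)[0] != baseline_top:
                flips.append((criterion, shift))
    return baseline_top, flips


# Two-criterion toy data, purely illustrative.
toy_weights = {"strategic_fit": 0.55, "time_to_revenue": 0.45}
toy_scores = {
    "option_x": {"strategic_fit": 5, "time_to_revenue": 1},
    "option_y": {"strategic_fit": 2, "time_to_revenue": 4},
}

top, flips = weight_sensitivity(toy_scores, toy_weights, delta=0.2)
print(top, flips)
```

An empty list of flips suggests the ranking is stable under the tested shifts. A non-empty list identifies the weights on which the decision depends most.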

Institutional Assessment

The model produced a structured strategic decision architecture designed to compare multiple growth paths under organizational constraints.

The framework integrates weighted evaluation criteria, feasibility assessment, risk analysis, and staged decision gates. The sequence of analysis progresses logically from criteria definition through final decision scoring, demonstrating coherent structural reasoning.

The model maintains internal consistency across evaluation components and preserves alignment with the organizational scenario presented in the prompt.

However, the framework remains conceptual rather than computational. The scoring matrix and risk analysis structures provide decision scaffolding but do not generate quantified comparative outputs.

Within the scope of this evaluation, the model demonstrates structured decision-framework construction but leaves quantitative modeling to human analysts.

Performance Classification: Strong

Assessment Status

Locked under Methodology v1.0.

Structural revisions require a formal version update.

— First Tier Review
