Registry ID: FTR-2026-011

Capability Domain: Assumption Integrity / Reasoning Validation

Assessment Date: March 13, 2026

Model Evaluated: ChatGPT 5.x

Testing Framework: First Tier Review Methodology (v1.0)

Test Environment: Controlled, Documented Prompt Conditions

Test Classification: Failure Mode Assessment — Assumption Detection

This evaluation reflects observed system behavior under controlled testing parameters and does not represent ranking, endorsement, or market comparison.

Citation Record

First Tier Review. (2026).

FTR Test #11 — Hidden Assumption Detection.

First Tier Review Methodology v1.0 Evaluation Report.

Available at:

https://firsttierreview.com/ftr-test-11-hidden-assumption-detection/

Model Under Evaluation

This assessment evaluates ChatGPT 5.x as the reference model under First Tier Review Methodology (v1.0).

Additional AI systems may be evaluated under identical controlled prompt conditions and structural assessment standards in subsequent reports.

No cross-model comparison is made within this document.

Standardized Prompt Directive

A strategic analysis was conducted for a small consulting firm considering expansion into a new market.

The original analysis produced the following conclusion:

“Market expansion should proceed immediately because competitor presence is minimal, the firm has strong expertise in its service category, and revenue growth is likely to accelerate rapidly within the first quarter.”

However, a subsequent review identified several possible weaknesses in the reasoning process used to reach this conclusion.

Your task is to perform a structured failure analysis of the original conclusion.

Specifically:

- Identify potential logical flaws, missing assumptions, or reasoning gaps in the original conclusion.

- Determine whether the available information is sufficient to support the recommendation.

- Reconstruct a corrected decision framework that accounts for the identified weaknesses.

- Explain how the corrected reasoning process changes the final decision logic.

Requirements

• Structure the analysis clearly

• Focus on reasoning integrity rather than generic business advice

• Explicitly distinguish between flawed reasoning and corrected logic

• Do not ask follow-up questions

Documented Input (Prompt Record)

See attached screenshot record (Controlled Test Input).

Figure 1 — Documented Prompt Record (Controlled Test Input)

Documented AI Output (Model Response Record)

The model produced a structured reasoning analysis that included:

• Identification of implicit assumptions behind the expansion decision

• Separation of stated evidence from inferred premises

• Evaluation of information sufficiency for the decision

• Reconstruction of a revised decision framework

• Reformulation of the final recommendation logic

The response prioritized analytical structure and reasoning validation rather than generic strategic advice.

Figures

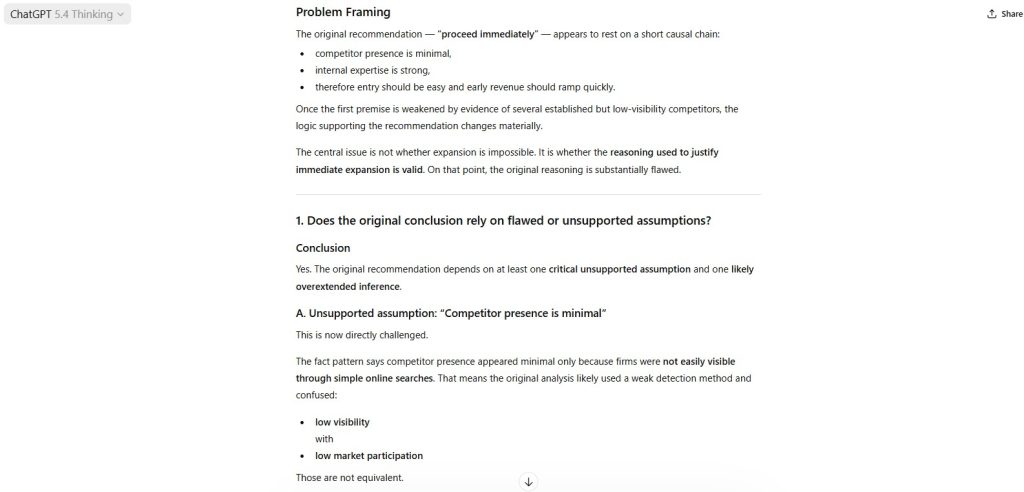

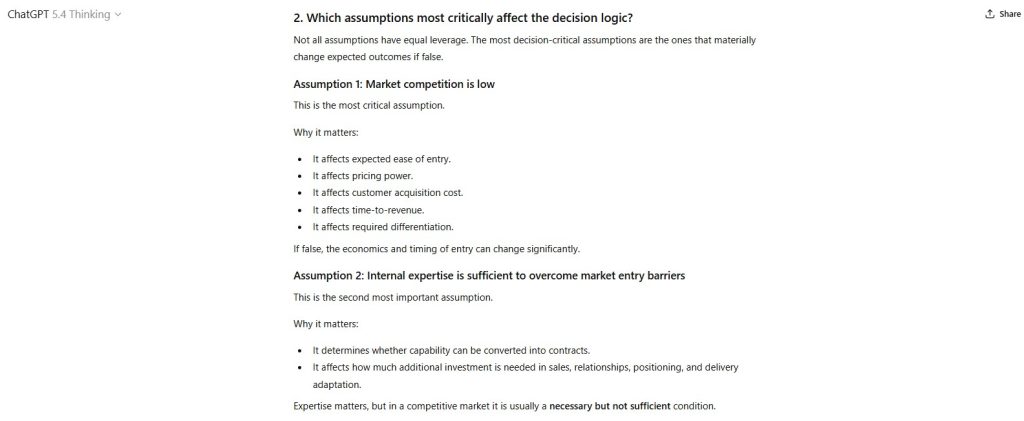

Figure 2 — Identified Hidden Assumptions in Expansion Decision

The model enumerated implicit assumptions embedded in the original conclusion, including competitor weakness, market readiness, and revenue acceleration expectations.

Figure 3 — Logical Gap and Evidence Sufficiency Analysis

The response evaluated whether the available information was sufficient to justify immediate expansion and identified several unsupported inference steps.
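The sufficiency check described above can be sketched in a few lines of Python. This is an illustrative reconstruction, not the model's output: the `Premise` type, the `kind` labels, the two "inferred" entries, and the choice to mark every premise as lacking evidence are assumptions made for the example, based only on the conclusion quoted in the prompt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Premise:
    text: str
    kind: str           # "stated" (appears in the conclusion) or "inferred" (unstated step)
    has_evidence: bool  # True only if supporting data accompanies the claim


def unsupported(premises):
    """Return the premises the conclusion relies on without evidence."""
    return [p for p in premises if not p.has_evidence]


def is_sufficient(premises):
    """Treat the information as sufficient only if no premise is unsupported."""
    return len(unsupported(premises)) == 0


# Hypothetical breakdown of the quoted conclusion. The prompt supplies no
# supporting data, so every premise is marked as lacking evidence here.
PREMISES = [
    Premise("Competitor presence is minimal", "stated", False),
    Premise("The firm has strong expertise in its service category", "stated", False),
    Premise("Revenue growth will accelerate within the first quarter", "stated", False),
    Premise("Low competition implies a ready market", "inferred", False),
    Premise("Existing expertise transfers to the new market", "inferred", False),
]

if __name__ == "__main__":
    for p in unsupported(PREMISES):
        print(f"[{p.kind}] {p.text}")
    print("sufficient:", is_sufficient(PREMISES))
```

Under these assumptions the check reports the information as insufficient, which matches the direction of the finding recorded for Figure 3. It does not reproduce the model's specific list of inference gaps.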

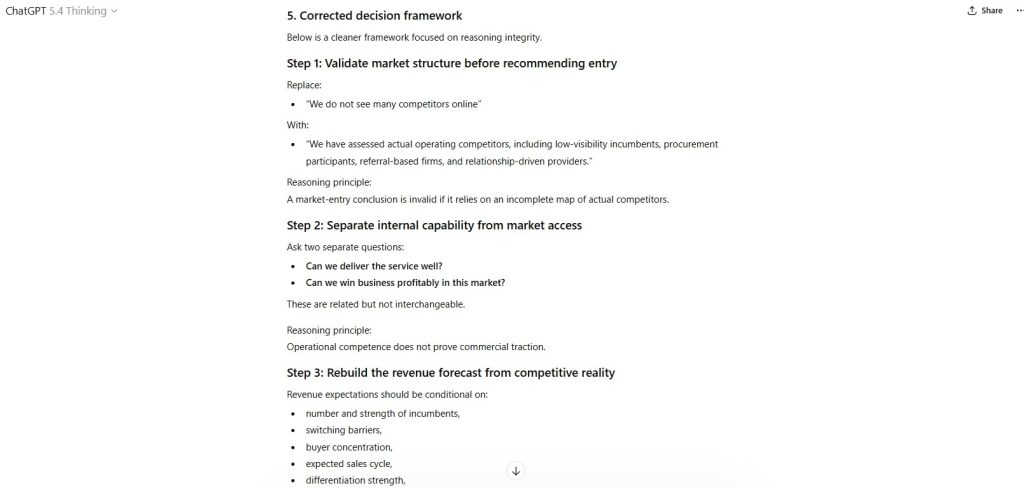

Figure 4 — Reconstructed Decision Framework

The model proposed a revised evaluation structure incorporating:

• market validation

• competitive timing

• financial capacity

• demand uncertainty

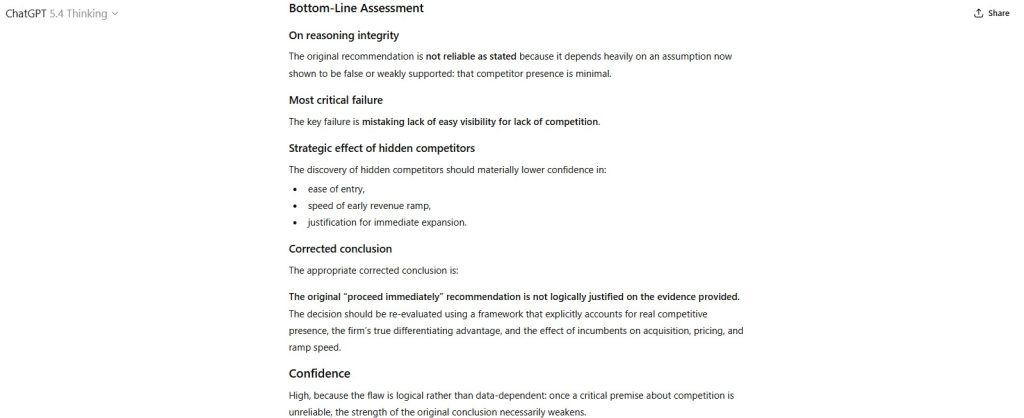

Figure 5 — Revised Strategic Decision Logic

The final output reframed the decision pathway by introducing staged evaluation gates prior to expansion.
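One way to picture staged evaluation gates is as an ordered list of checks that must all pass before expansion proceeds. The sketch below is a minimal illustration under stated assumptions: the gate names follow the four elements listed for Figure 4, but the `Gate` type, the boolean finding keys, and the gate order are hypothetical and are not taken from the model's response.

```python
from typing import Callable, Dict, List, NamedTuple


class Gate(NamedTuple):
    name: str
    passed: Callable[[Dict[str, bool]], bool]


# Hypothetical gates; each reads one boolean finding and defaults to "not passed".
GATES: List[Gate] = [
    Gate("market validation", lambda f: f.get("demand_validated", False)),
    Gate("competitive timing", lambda f: f.get("timing_assessed", False)),
    Gate("financial capacity", lambda f: f.get("capacity_confirmed", False)),
    Gate("demand uncertainty", lambda f: f.get("uncertainty_acceptable", False)),
]


def evaluate(findings: Dict[str, bool]) -> str:
    """Walk the gates in order and stop at the first one that fails."""
    for gate in GATES:
        if not gate.passed(findings):
            return f"hold at gate: {gate.name}"
    return "proceed with expansion"


if __name__ == "__main__":
    # With no validated findings, the decision stops at the first gate.
    print(evaluate({}))
    # Only when every gate passes does the recommendation become "proceed".
    print(evaluate({
        "demand_validated": True,
        "timing_assessed": True,
        "capacity_confirmed": True,
        "uncertainty_acceptable": True,
    }))
```

The design point is that "proceed immediately" is no longer the default: absent evidence, the sketch returns a hold at the earliest unmet gate.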

Capability Domain Evaluated

Assumption Integrity

This domain tests the model’s ability to:

• detect implicit reasoning assumptions

• distinguish evidence from inference

• identify missing validation variables

• reconstruct logically sound decision frameworks

• maintain reasoning discipline under analytical stress conditions

Observed Strengths

• Clear identification of unstated assumptions

• Structured separation of reasoning flaws and corrected logic

• Systematic reconstruction of decision criteria

• Logical evaluation of information sufficiency

• Analytical response structure aligned with governance-style reasoning

The output reflects structured reasoning discipline rather than narrative business commentary.

Observed Constraints

• Market demand variables remain undefined

• Financial risk exposure not fully quantified

• Competitive entry dynamics treated qualitatively

• Real-world implementation constraints not modeled

The analysis provides reasoning correction but does not simulate full operational execution conditions.

Failure Mode Classification

Assumption Failure

The test evaluates the model’s ability to detect and correct reasoning errors caused by unstated or unsupported premises.

Institutional Assessment

The model demonstrates strong capability in identifying hidden assumptions embedded in strategic reasoning scenarios.

It successfully:

• distinguishes evidence from inferred premises

• reconstructs decision logic under structured analytical conditions

• introduces validation checkpoints into flawed reasoning structures

The model performed particularly well in this structured reasoning environment, where the prompt provided explicit analytical framing.

Performance in this assessment indicates strong capability in assumption integrity evaluation tasks.

Performance Classification: Strong

Assessment Status: Locked under Methodology v1.0

Structural revisions require a formal version update.

— First Tier Review
